Career centers rarely struggle because staff are unwilling to learn. They struggle because professional development is often treated as a training calendar, not a system for improving service quality.

That matters institutionally because students experience career services through the consistency of everyday interactions: resume feedback, workshops, employer conversations, referrals, and follow-up.

When staff development is not reinforced through shared standards, role-based learning, calibration, and measurement, service quality varies across the office.

This guide explains how career centers can build a stronger professional development model for staff, including the capabilities to prioritize, how to segment learning by role and experience level, how to design a year-round PD cycle, and how to measure whether development is actually improving student-facing service.

Why one-off training rarely changes advising quality

One-off training rarely changes advising quality because it improves awareness faster than it changes behavior. Advisors may leave a session with new language or a new tool, but without reinforcement, calibration, and workflow integration, the team returns to prior habits.

Most directors have seen this pattern. A campus brings in a speaker on employer engagement, AI-assisted resume review, or inclusive advising. The session gets strong satisfaction comments.

Three weeks later, advising notes, document feedback, and workshop delivery look largely unchanged.

The problem usually isn't the content. It's the delivery architecture. Advising is a judgment-heavy practice, and judgment changes through repetition, observation, and correction.

What actually breaks after the workshop

A single event doesn't answer the operational questions that drive service quality:

- Where advisors should use the new method: In appointments, workshops, drop-ins, or asynchronous review.

- What counts as strong execution: Shared rubrics, sample cases, and observed behaviors.

- Who checks consistency: A supervisor, a peer cohort, or a practice lead.

- What gets retired: Legacy scripts, outdated handouts, or duplicate workflows.

When those decisions stay unresolved, teams improvise. Improvisation creates uneven student experiences across advisors, colleges, and appointment types.

Practical rule: If a new training topic doesn't change a rubric, workflow, dashboard, or supervision practice, it probably won't change advising quality for long.

This matters more in career services than many units admit. Students often experience the office through one appointment, one resume review, or one workshop.

If staff apply different standards, students don't see “professional discretion.” They see inconsistency.

A better model treats PD as a service-quality system. Training introduces a method. Team leads then embed it in observation, case review, templates, and routine feedback.

That's when learning starts to affect the student-facing work.


Which capabilities career services staff need most right now

Career services staff need a tighter capability mix: data literacy, AI tool fluency, consultative employer engagement, equity-centered outreach, and operational consistency. These capabilities matter because career centers are no longer judged only by how many appointments they complete. They are increasingly expected to support more students, coordinate across academic units, use technology responsibly, and show evidence that services are improving readiness and outcomes.


According to the U.S. Bureau of Labor Statistics profile for training and development specialists, employment in that category is projected to grow 11% from 2024 to 2034, and 44% of workers' skills are expected to be disrupted in the next five years.

The same data set also notes that over one-third of career services professionals report slower-than-expected career progression.

That combination has two implications for directors. First, staff development has to keep pace with labor-market shifts, employer expectations, and technology change.

Second, career centers need visible internal growth pathways so staff can build deeper expertise without having to leave the function to advance.

The capabilities worth prioritizing

- Data literacy: Staff should be able to interpret appointment demand, student engagement patterns, cohort gaps, workshop performance, employer activity, and tool adoption. This is not institutional research work. It is the ability to read service signals and adjust operations accordingly.

- AI tool fluency: Staff need to evaluate AI outputs, teach students how to use AI responsibly, and understand where automation can support resume review, mock interview preparation, student nudges, and administrative workflows without weakening judgment or privacy standards.

- Consultative employer engagement: Employer relations staff need stronger discovery, account management, and labor-market translation skills. The work increasingly depends on understanding employer needs, communicating student talent clearly, and connecting hiring patterns back to academic and student-preparation strategy.

- Equity-centered outreach: The uConnect article on improving equity and access through career center websites points to a hard truth: students who most need career services often are not the ones walking through the doors. Staff need practice in outreach design, identity-specific resource development, inclusive communication, and service models that reach students before they self-select into support.

- Operational consistency: Career centers need shared standards for how staff document appointments, review student materials, run workshops, communicate with employers, escalate complex cases, and use technology. Without shared operating habits, students can receive very different levels of support depending on who they meet.

Northeastern University is a useful reference point because its career and co-op model connects student advising, employer engagement, and outcome visibility.

That kind of environment rewards staff who can read patterns across students, employers, and programs rather than working only within isolated service tasks.

For teams revisiting student readiness expectations, our guide to NACE career readiness competencies is useful as a student-facing competency reference.

The staff development question is broader: what capabilities help the entire career services team teach, assess, support, and scale those competencies consistently?

Strong professional development now has to cover both service judgment and tool judgment.

Career center teams need to know what high-quality support looks like, how to apply it across different service formats, and when technology strengthens or weakens the student experience.

How to segment staff development by role and experience level

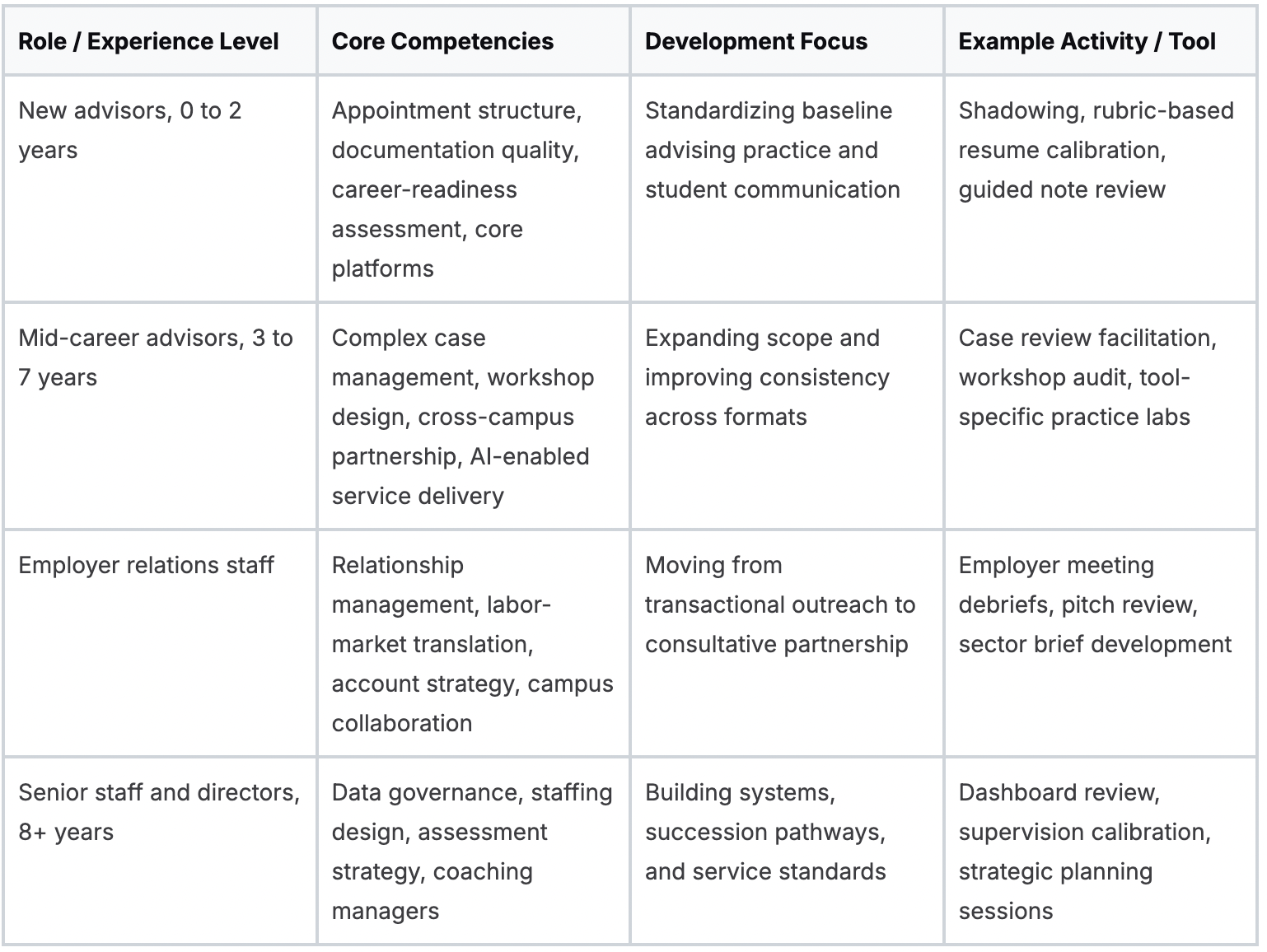

Staff development should be segmented by both role and experience level because advisors, employer-relations staff, and directors face different execution problems. A uniform calendar may look equitable, but it usually produces low relevance for everyone.

Best-in-class university career services centers employ an average of 14 full-time professional staff, with a median of 1,381 students served per professional, according to Inside Higher Ed’s benchmarking summary.

In larger operations, that scale makes one-size-fits-all PD especially wasteful. Clemson University’s Michelin Career Center is a good example of a team structure where segmentation becomes a practical necessity rather than a luxury.

What segmentation changes in practice

New staff need more observation, guided practice, and correction than conference attendance. Mid-career staff often need stretch work that broadens responsibility across workshops, partnerships, technology use, and cohort-level programming.

Employer relations teams need development that helps them move from event logistics to strategic relationship management. Operations and assessment staff need training that strengthens the systems behind the student experience.

Many teams misallocate budget by sending everyone to the same external training, then wondering why service quality, internal promotion, or cross-team execution has not changed.

Equal access to development is important, but identical development is rarely the best use of time or money.

Our career center staffing model guide is a useful operational companion because staffing structure and PD design should inform each other.

If your staffing model includes specialized roles across advising, employer relations, operations, peer coaching, and assessment, your development architecture should reflect that specialization.

Segmentation does not fragment the team. It gives the team a shared service-quality framework with different learning paths based on role, experience level, and decision-making responsibility.

How to build a year-round professional development plan

A year-round professional development plan should run as an operating cycle with skills assessment, role-based design, blended delivery, and quarterly review. That structure turns PD from an event calendar into a capability-building system tied to service priorities.

A strong model already exists in the field. According to NYIT’s best practices document on career services management, a structured PD methodology includes a thorough skills assessment, personalized learning paths, and blended training split 40% technical, 30% mentorship, and 30% rotation, with quarterly KPI measurement.

Institutions using that model saw 35% higher internal promotion rates and 78% of participants reporting higher job satisfaction.
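To make the 40/30/30 blend concrete, here is a minimal Python sketch that divides an annual PD hour budget across the three formats. The 60-hour budget and the function name `allocate_pd_hours` are illustrative assumptions, not figures or tools from the NYIT document.

```python
def allocate_pd_hours(total_hours, split=None):
    """Divide a PD hour budget across delivery formats.

    The default shares follow the 40/30/30 blend described in the text.
    The budget passed in is whatever the center decides; nothing here
    is prescribed by the source.
    """
    split = split or {"technical": 0.40, "mentorship": 0.30, "rotation": 0.30}
    # Guard against shares that do not add up to the whole budget.
    if abs(sum(split.values()) - 1.0) > 1e-9:
        raise ValueError("split shares must sum to 1.0")
    return {fmt: round(total_hours * share, 1) for fmt, share in split.items()}


# Hypothetical example: 60 PD hours per staff member per year.
plan = allocate_pd_hours(60)
print(plan)
```

A center that weights formats differently for new staff versus mid-career staff could pass a different `split` per role rather than changing the function.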

A practical annual cycle

Q1 should diagnose actual performance gaps. Use supervisor observations, student feedback themes, workflow bottlenecks, and staff self-assessments. Avoid building the plan from conference trends alone.

Q2 should narrow the curriculum. Pick a small number of institutional priorities, then assign different learning paths by role. Common examples include AI-supported advising, employer-facing consultation, or outreach to underserved student populations.

Q3 is where teams usually drift. This is the point to move beyond workshops into practice: peer review, supervised application, short refreshers, and job rotation.

Q4 should be about evidence, not celebration. Compare what staff are doing now against the baseline. Keep what changed practice. Drop what only generated positive reactions.

Formats that sustain follow-through

A year-round plan works best when the delivery mix is varied:

- Technical learning: Platform use, analytics interpretation, documentation standards.

- Mentorship and coaching: Manager feedback, peer coaching, and developmental supervision.

- Rotation and exposure: Short-term work across employer relations, operations, assessment, or academic partnerships.

Some teams also need low-friction ways to document and share practice. For centers designing this more intentionally, our advisor development frameworks can serve as a planning reference for role-based capability mapping.

How to use peer observation, calibration, and case reviews

Peer observation, calibration, and case reviews improve service delivery when they are framed as practice improvement, not surveillance. The goal is to make quality standards visible, discuss edge cases openly, and reduce variation in how students, employers, and campus partners experience the career center.

The University of Richmond is a useful institutional example because a personalized advising model depends on consistent judgment across staff.

Personalization without calibration can quickly become staff-to-staff drift, where students receive different guidance, different expectations, or different next steps depending on who they meet.

Peer observation that staff will actually accept

Keep peer observation non-evaluative. Use a short template focused on a few observable behaviors, such as agenda-setting, questioning quality, labor-market translation, documentation, student handoff, and closing the interaction with clear next steps.

A workable sequence looks like this:

- Choose one service format such as internship search advising, resume review, employer consultation, workshop delivery, or drop-in support.

- Observe with a shared lens rather than general impressions.

- Debrief within a day while details are still specific.

- Ask for one adjustment before the next observed session.

- Capture the pattern if the same issue appears across multiple staff members.

Observation should not be limited to one-on-one advising. Career centers can also observe and review employer meetings, workshop facilitation, student email responses, peer coaching sessions, and intake workflows.

The point is to improve the quality of the service system, not to critique individual style.

Calibration and case review routines

Resume calibration is one of the fastest ways to raise consistency. Put the same student document in front of multiple staff members, use a shared rubric, and compare the feedback each person would give. The conversation usually surfaces hidden differences in standards, tone, prioritization, and expectations.
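One way to prepare for that conversation is to tabulate how far apart reviewers landed on each rubric criterion. The sketch below is a hedged illustration: the criterion names, the 1 to 4 scale, the reviewer labels, and the one-point discussion threshold are all assumptions, not part of any published rubric.

```python
from statistics import mean


def calibration_report(scores, max_spread=1.0):
    """Summarize reviewer agreement per rubric criterion.

    scores: {reviewer: {criterion: numeric score}} for ONE shared document.
    A criterion is flagged when the gap between the highest and lowest
    score exceeds max_spread (an assumed threshold, tune as needed).
    """
    criteria = sorted({c for s in scores.values() for c in s})
    report = {}
    for criterion in criteria:
        values = [s[criterion] for s in scores.values() if criterion in s]
        spread = max(values) - min(values)
        report[criterion] = {
            "mean": round(mean(values), 2),
            "spread": spread,
            "needs_discussion": spread > max_spread,
        }
    return report


# Hypothetical scores from three staff members reviewing the same resume.
sample_scores = {
    "advisor_a": {"formatting": 3, "impact_statements": 2, "relevance": 3},
    "advisor_b": {"formatting": 3, "impact_statements": 4, "relevance": 3},
    "advisor_c": {"formatting": 4, "impact_statements": 1, "relevance": 3},
}

report = calibration_report(sample_scores)
for criterion, row in report.items():
    print(criterion, row)
```

In this made-up data, reviewers agree on relevance and nearly agree on formatting, while impact statements show a three-point gap. That criterion is where the calibration discussion would likely be most useful.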

The same idea can apply beyond resumes. Employer relations teams can review how they would respond to the same employer request. Workshop facilitators can compare how they would teach the same concept.

Student engagement staff can review how they would segment and message students for the same campaign.

Case reviews are better for ambiguous situations. Bring anonymized cases to team meetings, especially when a student has intersecting concerns related to academics, identity, confidence, immigration considerations, accessibility needs, financial pressure, or competing job-search strategies.

The point is not to produce one perfect answer. It is to build stronger shared reasoning.

Our peer mentor program resource is relevant here because peer structures only work when the office has clear coaching, escalation, and calibration habits.

Staff who are not aligned with each other will struggle to train student peer leaders consistently.

Use calibration when the issue is consistency. Use case review when the issue is judgment. Use observation when the issue is execution.

How to measure whether staff development is improving service delivery

Measure staff development by linking training to baseline service metrics, observed behavior change, and student-facing outputs. If the only evidence is that staff liked the session, the center still does not know whether service delivery improved.

Figure: The four levels of the Kirkpatrick training evaluation model.

According to Antoinette Oglethorpe’s guidance on measuring career development success, effective PD evaluation starts with baseline KPIs such as 65% staff satisfaction, then tracks impact at 6 to 12 months.

Programs with that level of tracking saw retention jump by 15%. The same guidance warns that generic programs often fail to show impact.

It also gives a career-services-relevant example: track resume quality scores before and after staff training on a platform.
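A before-and-after comparison like that can be kept very simple. The following Python sketch assumes resume quality is scored on a 0 to 100 scale and that the center has a small sample of scores from each period; the numbers and the function name `score_change` are hypothetical. It describes the change only and does not show that training caused it.

```python
from statistics import mean


def score_change(baseline, follow_up):
    """Compare average scores before and after a training intervention."""
    base_avg = mean(baseline)
    follow_avg = mean(follow_up)
    return {
        "baseline_avg": round(base_avg, 2),
        "follow_up_avg": round(follow_avg, 2),
        "change": round(follow_avg - base_avg, 2),
        # Relative change against the baseline average, in percent.
        "pct_change": round(100 * (follow_avg - base_avg) / base_avg, 1),
    }


# Hypothetical resume quality scores (0-100) sampled before training
# and again several months afterward.
before = [62, 70, 58, 66]
after = [71, 74, 69, 70]

result = score_change(before, after)
print(result)
```

Small samples like this one are noisy, so a center would probably want to track the same comparison across several review periods before drawing conclusions.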

A simple measurement stack

Use three levels of evidence together:

- Reaction and learning: Short feedback, knowledge checks, confidence ratings, and scenario-based assessments after the intervention.

- Behavior change: Peer observation results, rubric agreement, note quality, employer follow-up quality, workshop delivery changes, and adoption of shared workflows.

- Service outcomes: Faster turnaround, stronger document feedback, improved student use of tools, better workshop completion, higher employer follow-through, cleaner handoffs, and more consistent service across staff members.

Many teams under-measure. They track attendance and satisfaction, then stop. Directors need a narrower but more operational dashboard that shows whether professional development changed how the team works.

What to review every quarter

A useful quarterly review can include:

- Staff practice indicators: Observation themes, calibration drift, completion of role-based learning, use of shared templates, and manager coaching notes.

- Service delivery indicators: Quality of advising notes, workshop execution, employer follow-up consistency, student communication quality, campaign completion, and response times.

- Student-facing proxy outcomes: Resume quality improvements, mock interview completion, tool adoption patterns, workshop-to-appointment conversion, and improved engagement among priority student groups.

- Cross-team execution indicators: Handoff quality between advisors and employer relations, consistency of student referrals, accuracy of reporting fields, and alignment between programming, advising, and employer activity.
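Several of these indicators reduce to simple ratios. As one hedged example, workshop-to-appointment conversion can be computed from two lists of student identifiers. The identifiers and the function name below are assumptions for illustration; real data would come from whatever appointment and event system the center uses.

```python
def workshop_to_appointment_conversion(attendees, appointment_students):
    """Percent of workshop attendees who later booked an appointment.

    Both arguments are iterables of student identifiers. Duplicates are
    ignored, so a student who attended twice is counted once.
    """
    attendee_set = set(attendees)
    if not attendee_set:
        return 0.0  # no attendees, so there is nothing to convert
    converted = attendee_set & set(appointment_students)
    return round(100 * len(converted) / len(attendee_set), 1)


# Hypothetical identifiers for one workshop and the following weeks.
workshop_attendees = ["s1", "s2", "s3", "s4", "s5"]
later_appointments = ["s2", "s4", "s9"]

rate = workshop_to_appointment_conversion(workshop_attendees, later_appointments)
print(f"{rate}% of attendees booked an appointment")
```

The follow-up window (for example, appointments within 30 days of the workshop) is a design choice the center would need to fix in advance so quarters stay comparable.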

The institutional question is not whether staff enjoyed professional development. It is whether students, employers, and campus partners are receiving more consistent, higher-quality support because of it.

Wrapping Up

Professional development for career services staff works best when it functions as an ongoing service-quality system, not a disconnected set of trainings.

Career centers that build stronger staff capabilities through role-based development, calibration, operational consistency, and measurable performance are better positioned to scale support, improve student outcomes, and adapt to changing institutional demands.

For teams looking to strengthen both staff capability and student-facing infrastructure, technology can play an important supporting role.

Hiration helps career centers extend their professional development strategy by combining career assessments, AI-powered resume optimization, interview simulation, and counselor workflow management within one FERPA- and SOC 2-compliant ecosystem. That gives staff stronger tools while reinforcing consistent, scalable service delivery across the entire student journey.