What should career center dashboards actually measure to prove institutional impact?

Career center dashboards should shift from activity metrics to outcome-based indicators that prove measurable change. This includes tracking student readiness gains through scored artifacts, employer pipeline quality through conversion rates, and operational efficiency through cost per outcome, all supported by integrated data across career systems, student records, and first-destination results.

Most career center dashboards are activity logs masquerading as impact reports.

They track appointments, event attendance, and job postings: metrics of busyness, not student transformation.

This creates a strategic blind spot, leaving leaders unable to answer the one question that matters to university stakeholders: "What changed for the student because of your intervention?"

The core failure is an over-reliance on vanity metrics that are easy to count but impossible to link to verifiable career readiness or institutional ROI.

This guide reframes the dashboard from a rear-view mirror of activity to a forward-looking compass focused on leading indicators of student success and employer pipeline health.

What metrics actually signal career center impact?

Your dashboard must prioritize leading indicators over lagging ones. Focus on three core areas: verifiable student readiness gains (e.g., pre/post resume score improvement), employer pipeline quality (e.g., applicant-to-interview-to-hire ratios), and operational efficiency (e.g., cost per positive student outcome).

These metrics shift the narrative from "we were busy" to "we created measurable change."
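As a minimal sketch of how these three indicators could be computed, assume a rubric that scores resumes on a 0-100 scale and a simple count at each pipeline stage. The record shape, field names, and sample figures below are illustrative assumptions, not data from any real system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ResumeScores:
    """Pre/post rubric scores for one student (hypothetical 0-100 scale)."""
    student_id: str
    pre: float
    post: float


def average_readiness_gain(scores: list) -> float:
    """Mean post-minus-pre improvement across scored students."""
    if not scores:
        return 0.0
    return sum(s.post - s.pre for s in scores) / len(scores)


def funnel_rates(applicants: int, interviews: int, hires: int) -> dict:
    """Stage-to-stage conversion rates for one employer pipeline."""
    return {
        "applicant_to_interview": interviews / applicants if applicants else 0.0,
        "interview_to_hire": hires / interviews if interviews else 0.0,
        "applicant_to_hire": hires / applicants if applicants else 0.0,
    }


def cost_per_outcome(total_cost: float, positive_outcomes: int) -> Optional[float]:
    """Program spend divided by positive outcomes; None when there are none."""
    if positive_outcomes == 0:
        return None
    return total_cost / positive_outcomes


# Illustrative sample data only.
sample_scores = [
    ResumeScores("s1", pre=55, post=75),
    ResumeScores("s2", pre=60, post=70),
    ResumeScores("s3", pre=70, post=85),
]
gain = average_readiness_gain(sample_scores)
rates = funnel_rates(applicants=200, interviews=50, hires=10)
cpo = cost_per_outcome(total_cost=50_000, positive_outcomes=125)
```

What counts as a "positive outcome" in the denominator (an internship offer, a proficient rubric score, a first-destination placement) is a definitional choice each center would need to make and document.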

Moving beyond simple counts of advising sessions is critical. Instead, track the percentage of students who achieve a "proficient" score on a mock interview assessed against an institutional rubric.

According to NACE, such evidence-based metrics are essential for demonstrating value.

Similarly, instead of just counting jobs posted on a platform like Handshake, measure the internship-to-full-time conversion rate with key employer partners.

This requires collaboration with Institutional Research (IR) but provides undeniable proof of pipeline value.

To verify these metrics, you need artifacts. A resume score improvement is verified by the before-and-after documents scored against a consistent rubric.

A high internship conversion rate is verified by first-destination survey data cross-referenced with employer-provided hiring numbers.

The goal is to ensure every key metric is backed by tangible evidence, not just self-reported data.

How should a strategic dashboard be structured?

Design separate, role-specific dashboard views for leadership, advisors, and academic partners. A one-size-fits-all layout leads to metric overload and low adoption. Each view should answer the user's primary questions at a glance, using a clear visual hierarchy that prioritizes strategic insights over raw operational data.

For university leadership (provosts, deans), the dashboard must tell a high-level story of institutional ROI.

Key visuals include longitudinal placement rates (3-5 year trend), overall NACE competency attainment across the graduating class, and a funnel tracking employer pipeline health from engagement to hire.

This view avoids granular details like individual appointment numbers.

For advisors, the dashboard is a tactical case management tool.

It should surface at-risk students (e.g., low engagement, no approved resume by junior year) and track cohort progress against readiness milestones.

For example, Wake Forest University’s Office of Personal and Career Development (OPCD) uses data to show advisors the direct correlation between student participation in specific programs, like career treks, and their success in securing internships at those host companies.

This makes the data actionable for targeted student outreach.

Verification comes from advisor logs showing they acted on the dashboard's flags, followed by an increase in the flagged student's engagement metrics.
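The at-risk flags described for the advisor view could be expressed as a few explicit rules. This is a sketch under stated assumptions: the `StudentRecord` fields and both thresholds are hypothetical, and a real center would set them together with its advisors.

```python
from dataclasses import dataclass


@dataclass
class StudentRecord:
    student_id: str
    class_year: int             # 1 = first-year ... 4 = senior
    touchpoints_this_term: int  # appointments, events, and similar contacts
    has_approved_resume: bool


# Hypothetical thresholds, chosen only for illustration.
MIN_TOUCHPOINTS = 1
RESUME_DUE_BY_YEAR = 3  # junior year


def risk_flags(student: StudentRecord) -> list:
    """Return the names of every rule this student trips."""
    flags = []
    if student.touchpoints_this_term < MIN_TOUCHPOINTS:
        flags.append("low_engagement")
    if student.class_year >= RESUME_DUE_BY_YEAR and not student.has_approved_resume:
        flags.append("no_approved_resume")
    return flags


def outreach_list(students: list) -> list:
    """Flagged students only, those with the most flags first."""
    flagged = [(s.student_id, risk_flags(s)) for s in students]
    flagged = [(sid, flags) for sid, flags in flagged if flags]
    return sorted(flagged, key=lambda item: len(item[1]), reverse=True)


# Illustrative sample data only.
students = [
    StudentRecord("a", class_year=3, touchpoints_this_term=0, has_approved_resume=False),
    StudentRecord("b", class_year=2, touchpoints_this_term=0, has_approved_resume=False),
    StudentRecord("c", class_year=4, touchpoints_this_term=3, has_approved_resume=True),
    StudentRecord("d", class_year=3, touchpoints_this_term=2, has_approved_resume=False),
]
outreach = outreach_list(students)
```

Keeping each rule named and separate makes it easier to show advisors exactly why a student appears on the list, which supports the co-design approach rather than an opaque risk score.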

For academic partners, the dashboard should benchmark their department's students against university-wide averages for engagement, internship participation, and first-destination outcomes.

This comparative data, often visualized in a heat map or bar chart, provides context and sparks collaborative conversations about integrating career development into the curriculum.
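A departmental benchmark of this kind reduces to a per-department rate and its gap from the university-wide rate. The sketch below assumes a flat list of hypothetical `(department, completed_internship)` rows; the department names and values are invented for illustration.

```python
from collections import defaultdict

# Hypothetical rows: (department, completed_internship)
records = [
    ("Biology", True), ("Biology", False), ("Biology", False), ("Biology", False),
    ("Economics", True), ("Economics", True), ("Economics", True), ("Economics", False),
    ("History", True), ("History", False),
]


def internship_benchmark(rows):
    """Return the overall internship rate and each department's rate and gap."""
    totals = defaultdict(int)
    interns = defaultdict(int)
    for dept, did_intern in rows:
        totals[dept] += 1
        interns[dept] += int(did_intern)

    student_count = sum(totals.values())
    overall = sum(interns.values()) / student_count if student_count else 0.0

    by_dept = {}
    for dept in totals:
        rate = interns[dept] / totals[dept]
        by_dept[dept] = {"rate": rate, "gap_vs_university": rate - overall}
    return overall, by_dept


overall_rate, benchmark = internship_benchmark(records)
```

The `gap_vs_university` value is what a heat map or diverging bar chart would encode. Departments with few students produce unstable rates, so a minimum cohort size before display may be worth considering.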

What data sources and tools are required?

A truly insightful dashboard requires data integration beyond your career services management (CSM) platform. You must blend data from the CSM (e.g., Symplicity), the Student Information System (SIS), the Learning Management System (LMS), and alumni relations databases to get a holistic view of the student lifecycle.

Relying solely on a CSM provides a keyhole view, tracking activities but missing outcomes.

To answer strategic questions like whether early career engagement improves first-to-second-year retention, you must connect your CSM data with SIS retention data.

Research from EDUCAUSE identifies this lack of an integrated data architecture as a primary obstacle to student success initiatives.

For visualization, tools like Tableau or Power BI are industry standards for creating dynamic, multi-source dashboards.

For example, Purdue University’s Center for Career Opportunities (CCO) partners directly with their IR office to blend these disparate data sources.

This allows them to demonstrate how participation in their programs correlates with higher GPAs (from the SIS) and positive post-graduation outcomes (from first-destination surveys), proving their value to core institutional goals.

The verification is the multi-source data itself; the insights are only possible because the datasets have been credibly joined.
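To make the join concrete, here is a minimal sketch of linking a CSM engagement extract to an SIS retention extract on student ID. Both dictionaries are hypothetical stand-ins for real exports, and the IDs and values are invented.

```python
# Hypothetical extracts keyed by student ID.
# CSM: number of career center touchpoints in the first year.
csm_first_year_engagement = {"s1": 3, "s2": 0, "s3": 1, "s4": 0, "s5": 2, "s6": 0}
# SIS: whether the student enrolled for a second year.
sis_retained_to_year_two = {
    "s1": True, "s2": False, "s3": True, "s4": True, "s5": True, "s7": False,
}


def retention_by_engagement(csm: dict, sis: dict):
    """Inner-join on student ID, then compare retention for engaged vs. not."""
    matched_ids = csm.keys() & sis.keys()

    groups = {"engaged": [], "not_engaged": []}
    for sid in matched_ids:
        key = "engaged" if csm[sid] > 0 else "not_engaged"
        groups[key].append(sis[sid])

    # Retention rate per group; None if a group has no matched students.
    rates = {k: (sum(v) / len(v) if v else None) for k, v in groups.items()}

    # IDs present in only one system signal a data-quality gap worth reporting.
    unmatched = sorted((csm.keys() | sis.keys()) - matched_ids)
    return rates, unmatched


retention_rates, unmatched_ids = retention_by_engagement(
    csm_first_year_engagement, sis_retained_to_year_two
)
```

A difference between the two groups is a correlation, not proof of causation, since students who engage early may differ in other ways. Reporting the unmatched IDs alongside the rates helps show how credibly the datasets were joined.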

How do you interpret trends and avoid common pitfalls?

Interpret trends by focusing on correlations that inform strategy, while actively avoiding three common failure modes: metric overload, data silos, and low advisor adoption. The goal is to move from data reporting to data-driven decision-making, where every chart answers a specific strategic question and leads to a verifiable action.

Metric overload paralyzes decision-making. To avoid it, ruthlessly prioritize KPIs.

For every metric, ask: "Which strategic goal does this measure, and who will use it to make a decision?"

If it doesn't have a clear owner and purpose, it doesn't belong on the primary dashboard. This focus on "less but better" data is a core principle for any effective analytics dashboard.
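The owner-and-purpose test can be applied mechanically to a candidate KPI list. This is a sketch only: the `Kpi` structure and the sample entries are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Kpi:
    name: str
    strategic_goal: Optional[str]  # which goal this metric measures
    owner: Optional[str]           # who will act on it


def triage(kpis: list):
    """Split KPIs into those fit for the primary dashboard and those to park."""
    primary = [k.name for k in kpis if k.strategic_goal and k.owner]
    parked = [k.name for k in kpis if not (k.strategic_goal and k.owner)]
    return primary, parked


# Illustrative candidates only.
candidates = [
    Kpi("Mock interview proficiency rate", "Student readiness", "Advising lead"),
    Kpi("Internship-to-hire conversion", "Employer pipeline", "Employer relations"),
    Kpi("Total job postings", None, None),
    Kpi("Event attendance", "Student readiness", None),
]
primary_kpis, parked_kpis = triage(candidates)
```

Parked metrics do not have to be deleted; they can live in a secondary operational view until someone can name both the goal they serve and the person who will act on them.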

Low advisor adoption occurs when dashboards are perceived as surveillance tools.

To prevent this, co-design the advisor view with your team.

George Mason University's career services team uses dashboards to identify low-engagement student populations, enabling advisors to design targeted outreach.

This frames the tool as a strategic ally for improving student service, not a performance tracker.

Verification of successful adoption is qualitative: listen for advisors citing dashboard trends in team meetings to justify new programming ideas.

What does an effective KPI dashboard framework look like?

An effective framework translates abstract competencies into measurable behaviors and verifiable evidence. This structure ensures every KPI on your dashboard is defensible and directly linked to student development. It moves beyond self-reported skills to institutionally vetted proof of proficiency, which is essential for both internal assessment and external employer signaling.

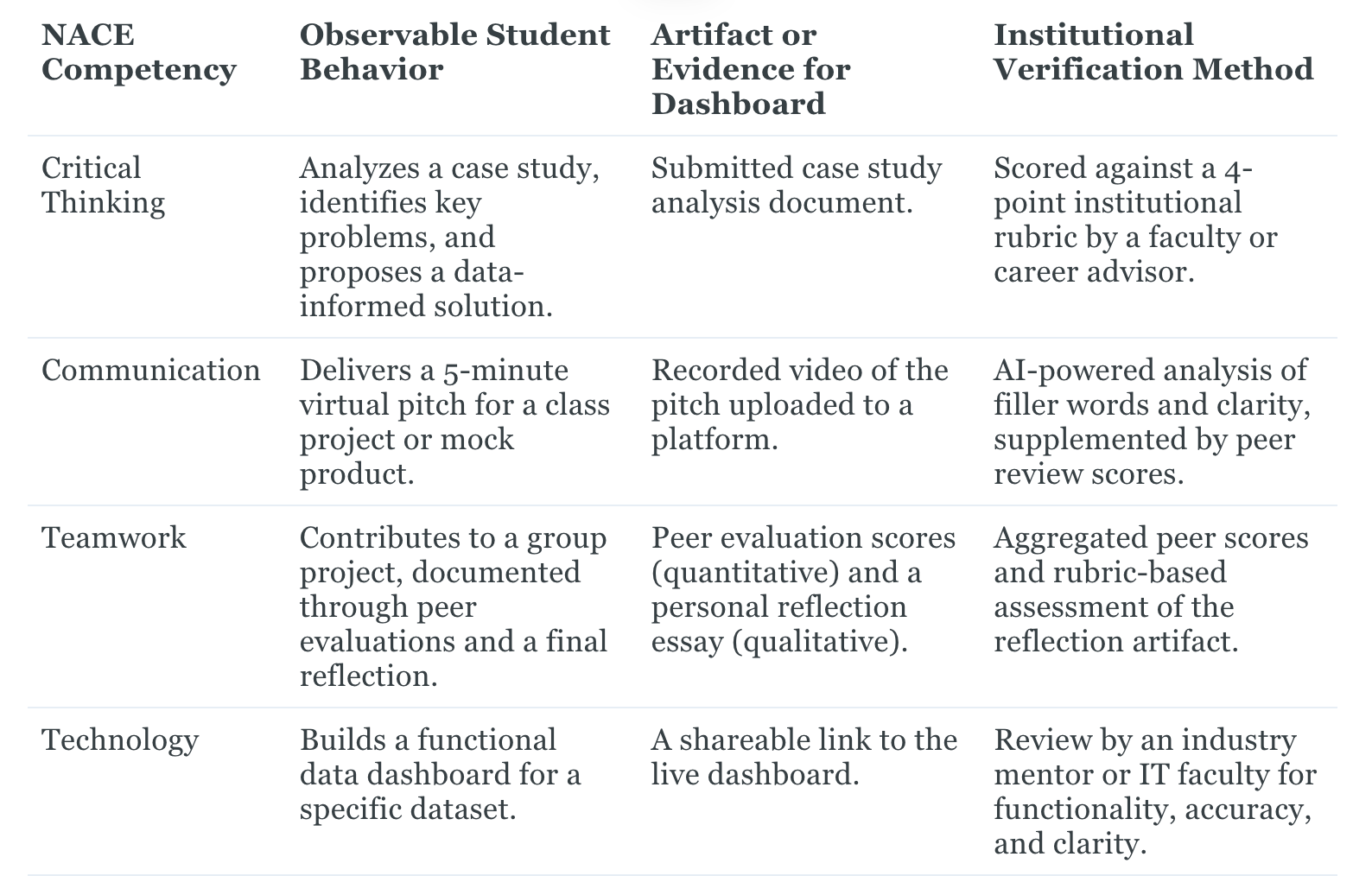

The following table connects abstract NACE competencies to concrete student behaviors and the institutional evidence required to verify them.

Inspired by the badging program at Clemson University, it provides a blueprint for building a dashboard grounded in tangible proof of student learning.

By mapping competencies this way, you create a clear and defensible methodology for every readiness KPI on your dashboard, ensuring each data point is backed by real, observable student work.
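One way to hold such a mapping in code is a record per competency with its behavior and evidence, plus a check that nothing is left unverified. The rows below are placeholders written for illustration; they are not the contents of the table, and the behaviors and artifacts are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class CompetencyKpi:
    competency: str                               # NACE competency name
    behavior: str                                 # observable student behavior
    evidence: list = field(default_factory=list)  # artifacts that verify it


def undefended(kpis: list) -> list:
    """Competencies whose KPI lacks a stated behavior or any evidence."""
    return [
        k.competency
        for k in kpis
        if not k.behavior.strip() or not k.evidence
    ]


# Placeholder rows only; replace with the institution's own mapping.
framework = [
    CompetencyKpi(
        competency="Communication",
        behavior="Gives a structured answer in a mock interview",
        evidence=["Rubric-scored mock interview"],
    ),
    CompetencyKpi(
        competency="Teamwork",
        behavior="Contributes to a group project deliverable",
        evidence=[],  # no artifact yet, so this KPI is not defensible
    ),
]
gaps = undefended(framework)
```

Running a check like this before a KPI reaches the dashboard keeps the "every data point is backed by student work" rule enforceable rather than aspirational.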

Wrapping Up

Career centers are now being evaluated on outcomes, not activity.

The teams that stand out are the ones that can show clear evidence of student skill development, employer pipeline health, and institutional impact, backed by verifiable data, not just participation counts.

A well-designed dashboard becomes the operating system for how your center prioritizes time, allocates resources, and proves value to stakeholders.

This is exactly where the right infrastructure matters.

Hiration is built to support this evidence-first approach by capturing student artifacts, scoring them against consistent rubrics, and generating the kinds of readiness data that can feed directly into your reporting and dashboards.

From resume optimization and interview simulation to cohort management and analytics, everything sits within one unified system designed for scale, security, and institutional reporting needs.

As you build or refine your dashboard, the goal is simple: every metric should tie back to a real student behavior, a verified artifact, and a measurable outcome.

When that alignment is in place, your dashboard stops being a record of activity and becomes a defensible proof of impact.

Career Center Dashboard Strategy — FAQs

Why are most career center dashboards ineffective?

Many dashboards focus on activity metrics like appointments and event attendance, which show effort but not outcomes. Without linking activity to skill gains, employment results, or retention, leadership cannot see institutional value.

What metrics actually prove career center impact?

High-impact dashboards track leading indicators such as resume score improvements, mock interview proficiency rates, internship-to-hire conversion, and cost per positive student outcome, all tied to verified student artifacts and first-destination data.

How should dashboards be structured for different stakeholders?

Leadership dashboards should show ROI, placement trends, and pipeline health. Advisor dashboards should highlight at-risk students and readiness milestones. Academic partner dashboards should compare departmental engagement and outcomes to institutional benchmarks.

What data sources are needed to build a strategic dashboard?

Effective dashboards require integrated data from career management systems, student information systems, learning platforms, alumni databases, and first-destination surveys to capture the full student lifecycle and outcomes.

How can career centers verify the accuracy of their dashboard metrics?

Each metric should be backed by verifiable evidence such as scored student artifacts, employer hiring data, advisor intervention logs, and first-destination outcomes, ensuring data reflects actual student progress rather than self-reported metrics.

What are the most common mistakes to avoid when building dashboards?

The most common pitfalls include tracking too many metrics, relying on siloed data, and creating dashboards advisors don’t use. Every KPI should map to a strategic goal and have a clear owner responsible for acting on it.

How do dashboards support better advising and student outcomes?

Dashboards help advisors identify low-engagement or at-risk students early, prioritize interventions, and track progress against readiness milestones, enabling targeted support that improves internship and employment outcomes.

What does a defensible KPI framework look like for career readiness?

A defensible framework maps competencies to observable student behaviors and verified evidence, ensuring each KPI is grounded in tangible student work and measurable development rather than perception or participation alone.