How can career centers design workshops that lead to measurable student outcomes?

Career centers can design outcome-driven workshops by focusing on observable student outputs rather than attendance alone. Effective workshops use structured formats, require artifact creation such as resume revisions or interview responses, combine human and AI-supported feedback, and track progress across participation, skill improvement, and follow-through actions to demonstrate real impact.

Career readiness workshops often look successful: strong attendance, smooth delivery, positive feedback. Yet many centers struggle to prove what actually changed for students afterward.

Without clear evidence like improved resumes, stronger interview performance, or follow-through actions, workshops risk becoming high-effort activities with limited impact.

Career centers are under growing pressure to show outcomes tied to student success, retention, and post-graduation results, not just participation.

When workshops can’t demonstrate measurable improvement, it becomes harder to justify resources, scale programs, or defend budget decisions.

This guide outlines how to design, deliver, and measure workshops that produce observable results, covering formats, activity design, facilitation models, attendance strategies, and outcome-focused measurement.

What workshop formats suit different scales and modalities?

The right format depends less on student preference than on delivery constraints and follow-through risk. Career readiness workshops work best when the format matches advising capacity, student schedules, and the kind of evidence you need to capture, whether that’s a revised resume, a mock interview score, or a completed action plan.

When each format is operationally useful

One-day intensives fit institutions that need fast delivery before recruiting peaks. They’re easier to market, but they often compress reflection, practice, and feedback into a single advising event.

Students leave with momentum, yet staff still need a follow-up mechanism or the workshop becomes a standalone intervention.

Multi-session cohorts are stronger when the goal is behavior change. Resume drafting, interview rehearsal, and employer research improve when students return, revise, and compare progress over time. The trade-off is staffing continuity. If the same counselor can’t stay with the group, quality becomes uneven.

Virtual webinars expand reach and lower room logistics, especially for commuter, online, and graduate populations. Their weakness is observational assessment. It’s harder to tell whether students can perform a task or are only attending passively.

Hybrid models are the most demanding to set up and often the most resilient once established. They combine synchronous instruction with asynchronous practice, which is where AI-enabled tools can matter. The under-discussed advantage is not novelty. It’s advisor time allocation.

Routine tasks such as resume review triage, mock interview repetition, and progress tracking can move outside live workshop time, leaving staff to focus on exceptions and coaching.

The tech angle is still thin in public workshop literature.

One useful signal comes from an ACT-linked discussion of AI-hybrid delivery, which notes that integration remains understudied.

That doesn’t mean every center should rush to automate. It means hybrid design deserves evaluation as a staffing strategy, especially when institutions are already expanding virtual career treks and other remote employer-facing programming.

Practical rule: Choose the format by asking where your staff hours disappear. If advisors are spending them on repetitive feedback, hybrid models deserve serious attention.

How do you design engaging workshop activities for diverse learner needs?

Engaging activities are the ones that produce observable evidence, not just participation. In career readiness workshops, that means every exercise should end with an artifact, a scored performance, or a documented revision that an advisor can review later.

A practical sequence works well across diverse student groups:

- Start with a diagnostic task. Use a resume critique, interview prompt, or job-description matching exercise before any instruction. Students engage faster when they can see the gap in their own materials.

- Shift to structured peer work. Peer review is useful when the rubric is narrow. Ask students to identify evidence of role fit, not to give vague feedback on style.

- Require a visible revision. The activity isn’t complete when discussion ends. It’s complete when the student rewrites a bullet, records a response, or maps experience to a target role.

- Close with a transfer prompt. Students should name the next setting where they’ll use the skill, such as a fair, internship application, or advising appointment.

Mentorship can strengthen this design when workshops risk becoming one-off events.

According to Mentor Collective’s 2025 career readiness white paper, mentorship-enhanced workshops produced a 45% increase in workshop attendance and a 13% increase in interview practice.

That finding matters because it points to a design principle many centers underuse. Activities are more durable when another person expects follow-through.

What to build into the activity itself

Accessibility and evidence should sit inside the same workflow. Use captioned prompts, screen-reader-friendly handouts, typed and spoken participation options, and rubrics that describe performance in plain language.

For live sessions, short structured openers from these career coaching icebreakers can surface confidence gaps without spending half the workshop on introductions.

At UNC Charlotte, document-based career learning often works because students revise against specific employer expectations.

Similarly, at the University of Oregon, peer interaction fits workshop settings where reflective critique is part of the learning design.

Activities should answer one advisor question clearly: “What did the student produce that shows readiness improved?”

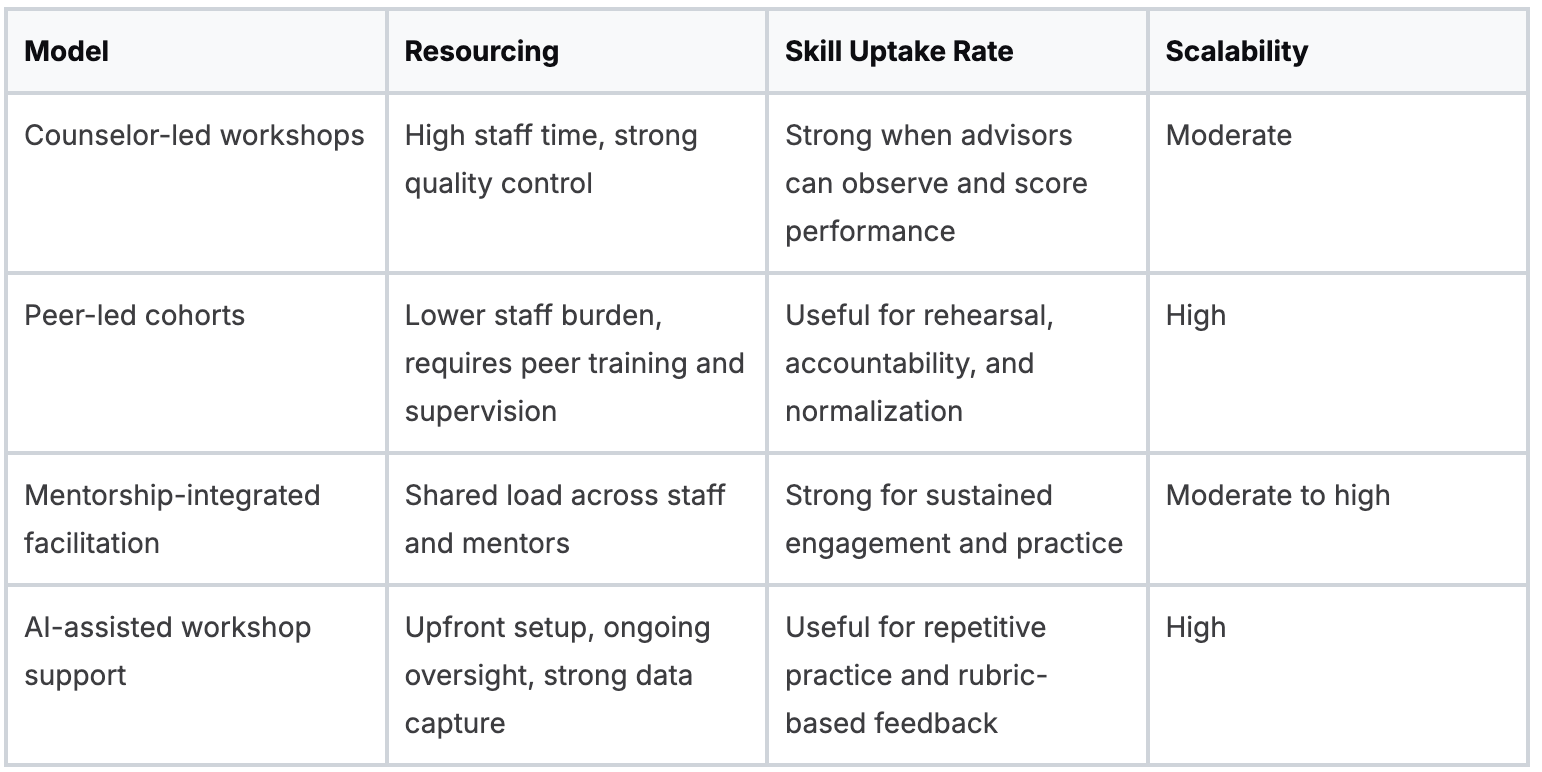

What facilitation models maximize skill uptake in career readiness workshops?

The strongest facilitation model is the one that matches instructional depth with staff reality. If your workshop asks students to demonstrate a skill, the facilitator model has to support feedback quality, repetition, and escalation when students stall.

One of the clearest comparative signals comes from Cincinnati State Technical and Community College.

In the ACT WorkKeys evaluation, 90% of students in foundational and soft skills workshops earned the National Career Readiness Certificate (NCRC), compared with 67% of students without ACT services.

That result suggests structured facilitation matters most when workshops are tied to assessment and support services rather than offered as isolated events.

Where each model breaks down

Counselor-led delivery gives the best signal when students need nuanced feedback, especially in interviewing, narrative framing, and employer-specific coaching. The risk is capacity.

Quality is hard to preserve once demand outgrows counselor hours.

Peer-led cohorts work well for accountability and repetition. They’re weaker when students need corrective feedback on technical content.

A peer can spot a vague bullet. A trained advisor is better at identifying why the bullet won’t survive recruiter review.

AI-assisted support can improve throughput if the center defines clear escalation rules. A platform can score, sort, and prompt.

It can’t replace judgment on sensitive cases, identity-related concerns, or discipline-specific labor market context. That’s why most centers should think in terms of blended facilitation, not substitution.
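One way to make escalation rules concrete is to write them down as a routing function. The sketch below is illustrative only: the flag names, the stall limit, and the `Submission` fields are hypothetical, and the score is assumed to come from whatever review tool a center already uses.

```python
from dataclasses import dataclass

# Hypothetical flags and limit; each center would define its own.
SENSITIVE_FLAGS = {"identity_concern", "disclosure_question", "field_specific_market"}
STALL_LIMIT = 2  # consecutive revisions with no score improvement


@dataclass
class Submission:
    student_id: str
    ai_score: int                # 0-100 score from an assumed AI review tool
    flags: set[str]              # topics raised by the student or the tool
    revisions_without_gain: int  # consecutive revisions that did not raise the score


def route(sub: Submission) -> str:
    """Return 'advisor' when human judgment is needed, otherwise 'automated'."""
    if sub.flags & SENSITIVE_FLAGS:
        return "advisor"    # sensitive or context-heavy cases go to a person
    if sub.revisions_without_gain >= STALL_LIMIT:
        return "advisor"    # the student has stalled despite automated prompts
    return "automated"      # routine feedback can stay in the tool


print(route(Submission("s1", 72, set(), 0)))
print(route(Submission("s2", 72, {"identity_concern"}, 0)))
```

The design choice is that the score never decides escalation by itself. Scores sort the queue, while flags and stalled progress decide who needs a counselor.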

For teams comparing delivery structures, these coaching models for career centers are useful to map workshop facilitation back to staffing and student complexity.

At Cincinnati State Technical and Community College, the signal came from tightly linked assessment, training, and certification. At Purdue University, facilitation design tends to succeed when workshop goals align with repeatable student workflows rather than one-time event attendance.

How can career services boost attendance for career readiness workshops?

Attendance rises when workshops are embedded in a guided pathway, not posted as isolated events. Students are more likely to show up when the invitation includes a clear use case, a personal nudge, and a next step that connects to advising, mentoring, or employer activity.

The strongest evidence available points to structured outreach. Research indexed in ERIC reports that mentorship-integrated interventions produced a 45% increase in career workshop participation.

That’s a useful reminder that promotion and program design can’t be separated. Attendance improves when someone is accountable for bringing students back.

Attendance drivers that hold up operationally

- Faculty referral loops work when faculty can send students into a clearly timed workshop tied to an assignment or milestone.

- Cohort enrollment reduces no-shows because students see the workshop as a sequence, not an optional drop-in.

- Segmented reminders matter more than broad promotion. Students respond when the message reflects class year, program, or recruiting stage.

- Friction-free registration is often overlooked. Tools such as class booking systems can reduce the administrative drag that keeps students from committing.
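Segmented reminders can be as simple as a lookup keyed on class year and recruiting stage. The following is a minimal sketch under stated assumptions: the segment labels and the message copy are invented for illustration, and a real center would supply its own.

```python
# Hypothetical segments and copy; real messages would come from the center.
TEMPLATES = {
    ("senior", "interviewing"): (
        "You have interviews coming up. Bring one answer to rehearse on {date}."
    ),
    ("junior", "applying"): (
        "Internship deadlines are close. Bring your resume draft on {date}."
    ),
}
DEFAULT = "Join the career readiness workshop on {date}. Bring one document to revise."


def reminder(class_year: str, stage: str, date: str) -> str:
    """Pick the message for a student's segment, falling back to a general one."""
    template = TEMPLATES.get((class_year, stage), DEFAULT)
    return template.format(date=date)


print(reminder("senior", "interviewing", "Oct 3"))
print(reminder("first-year", "exploring", "Oct 3"))
```

Each message names a concrete use case and something to bring, which mirrors the point above that students respond when the invitation reflects where they are in the recruiting cycle.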

If your turnout problem is concentrated among students who rarely opt in, these strategies to engage low-participation students are more relevant than another generic marketing push.

Low attendance is often a routing problem. Students don’t ignore workshops because the topic lacks value. They ignore workshops that arrive detached from timing, trust, and consequence.

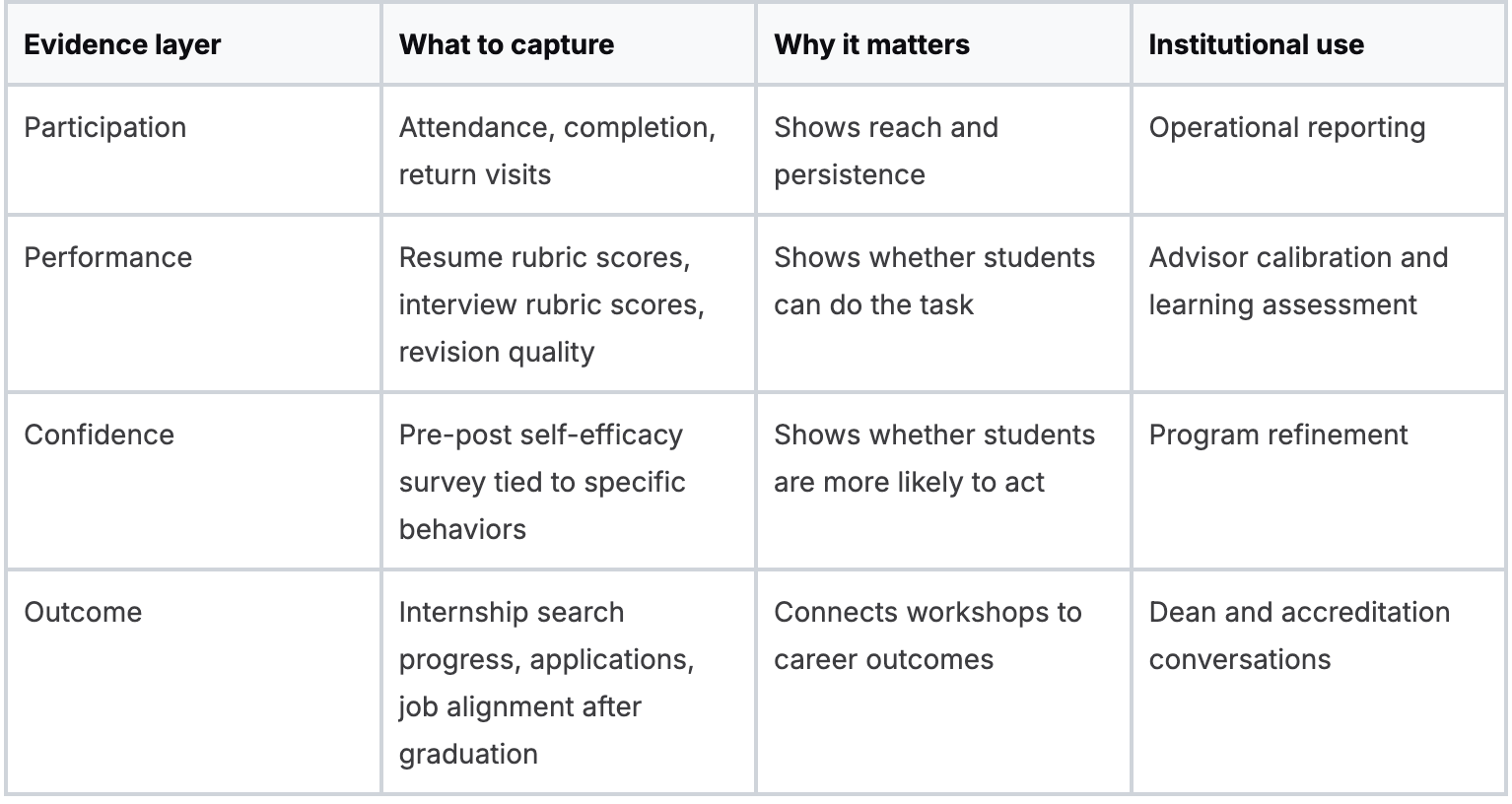

How should career centers measure the impact of career readiness workshops?

A useful measurement system starts with a simple fact. Workshop attendance is easy to count, but attendance says little about whether students improved a resume, practiced an interview answer well enough to score higher on a rubric, or took the next career action after the session. Career centers that report only headcount usually satisfy operational reporting, not budget, accreditation, or outcomes scrutiny.

Career centers should measure workshop impact through a stacked evidence model: participation, skill demonstration, confidence shift, and downstream outcome.

That structure matters because workshops sit in the middle of a longer student journey. They rarely cause placement outcomes on their own, yet they can produce observable changes in behaviors and artifacts that predict stronger progress later.

The strongest models connect these layers instead of treating them as separate dashboards.

If students attend a resume workshop, improve against a rubric, report higher confidence in tailoring documents, and submit more applications within the next month, the center can make a far more credible case for the workshop’s contribution than it could from attendance totals alone.
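To show how the layers can sit in one record rather than separate dashboards, here is a small sketch. The field names, the 1-5 confidence scale, and the 30-day window are assumptions chosen for illustration, and the sample numbers are made up.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class StudentEvidence:
    """One student's record across the four evidence layers (hypothetical fields)."""
    attended: bool
    rubric_pre: float      # resume rubric score before the workshop
    rubric_post: float     # rubric score on the revised resume
    confidence_pre: int    # self-rated confidence, assumed 1-5 scale
    confidence_post: int
    applications_30d: int  # applications submitted within 30 days


def summarize(cohort: list[StudentEvidence]) -> dict[str, float]:
    """Report each layer side by side for the students who attended."""
    attendees = [s for s in cohort if s.attended]
    if not attendees:
        return {"participation_rate": 0.0}
    return {
        "participation_rate": len(attendees) / len(cohort),
        "mean_rubric_gain": mean(s.rubric_post - s.rubric_pre for s in attendees),
        "mean_confidence_shift": mean(
            s.confidence_post - s.confidence_pre for s in attendees
        ),
        "share_applied_30d": sum(s.applications_30d > 0 for s in attendees)
        / len(attendees),
    }


# Made-up sample data for illustration only.
cohort = [
    StudentEvidence(True, 2.0, 3.5, 2, 4, 3),
    StudentEvidence(True, 3.0, 3.5, 3, 3, 0),
    StudentEvidence(False, 2.5, 2.5, 2, 2, 0),
]
print(summarize(cohort))
```

Reporting the four numbers together keeps confidence shifts next to rubric gains, so a rise in self-reported confidence is never read without the observable change beside it.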

That is also where AI-supported systems change the economics of measurement.

Manual artifact review gives high-quality evidence, but it consumes staff time quickly, especially at large institutions or in high-volume workshop series.

AI-powered platforms can score draft quality, track revision history, flag common weaknesses, and surface cohort patterns before advisors review the work.

Traditional workshops still matter because students need feedback, context, and practice with peers. The hidden cost in many workshop programs is not facilitation. It is the labor required to capture comparable evidence after the session.

For teams building that case internally, this guide to showing career center ROI and impact is a useful reference.

What to avoid

Avoid tying workshop value too tightly to placement outcomes. Placement reflects employer demand, academic program mix, geography, and labor-market timing as much as student preparation.

A better approach is to report contribution. Did the workshop improve application quality, interview readiness, or search persistence in ways the institution can document?

Avoid treating self-reported confidence as proof of learning. Confidence measures are useful when they are specific, pre-post, and tied to observable behaviors. They are weak evidence when they stand alone.

Avoid inconsistent scoring. At Northeastern University, a multi-channel student journey makes shared data definitions more important than isolated success stories.

Similarly, at Arizona State University, scale makes standardization more valuable than anecdotal feedback because comparable scoring is what allows patterns to surface across large student populations.
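A quick way to check scoring consistency is to have two reviewers score the same artifacts and compare. The sketch below uses simple agreement rates, which is one possible check and not a method the sources above describe. The rubric scale and the sample scores are hypothetical.

```python
def agreement(rater_a: list[int], rater_b: list[int]) -> dict[str, float]:
    """Compare two reviewers' rubric scores on the same set of artifacts."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of artifacts.")
    pairs = list(zip(rater_a, rater_b))
    exact = sum(a == b for a, b in pairs) / len(pairs)
    within_one = sum(abs(a - b) <= 1 for a, b in pairs) / len(pairs)
    return {"exact_agreement": exact, "within_one_point": within_one}


# Made-up scores on an assumed 1-4 rubric for five resumes.
scores_a = [3, 2, 4, 1, 3]
scores_b = [3, 3, 4, 3, 2]
print(agreement(scores_a, scores_b))
# → {'exact_agreement': 0.4, 'within_one_point': 0.8}
```

Low agreement usually points to a rubric whose levels are described too loosely, which is worth fixing before comparing cohorts or campuses.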

A good workshop measurement model answers three questions clearly: who participated, what changed in student performance, and which outcomes followed.

That is the level of evidence a provost can use, and it is the level of evidence that helps career centers decide where AI support lowers cost, where human facilitation raises learning quality, and which workshop formats deserve to expand.

Wrapping Up

Workshops will continue to play a central role in how career centers deliver guidance at scale.

The difference is no longer in how many sessions you run, but in how clearly those sessions translate into improved student performance, stronger artifacts, and measurable outcomes.

As teams rethink formats, facilitation, and measurement, the underlying challenge becomes operational: how to deliver consistent feedback, track progress across cohorts, and generate comparable evidence without overwhelming advisor capacity.

That’s where the right systems can quietly change the equation.

Hiration brings assessments, resume optimization, interview simulation, and cohort-level analytics into a single workflow, allowing routine feedback and tracking to happen outside live sessions while counselors focus on higher-impact coaching.

The goal is to help make workshops more effective, measurable, and scalable over time.

Career Readiness Workshops: FAQs

Why do many career workshops fail to show real impact?

Many workshops focus on attendance and satisfaction rather than measurable changes in student behavior, such as improved resumes, stronger interview responses, or follow-through actions.

What is the most important principle in workshop design?

The most important principle is requiring observable outputs. Every activity should result in an artifact, performance, or documented revision that demonstrates student progress.

Which workshop formats work best for different goals?

One-day intensives work for quick delivery, multi-session cohorts support behavior change, webinars expand access, and hybrid models balance scale with structured practice and feedback.

How should workshop activities be structured?

Activities should include a diagnostic task, structured peer work, a required revision or output, and a transfer step that connects the skill to real-world application.

What facilitation model works best for skill development?

Blended facilitation works best, combining counselor expertise, peer support, and AI-assisted feedback to balance quality, scalability, and efficiency.

How can career centers increase workshop attendance?

Attendance improves when workshops are embedded into structured pathways, supported by faculty referrals, cohort enrollment, targeted messaging, and low-friction registration.

What metrics should be used to measure workshop success?

Career centers should track participation, skill improvement, confidence shifts, and downstream actions such as applications or interview attempts to demonstrate impact.

Why is attendance alone not enough as a metric?

Attendance shows reach but not effectiveness. Without evidence of improved performance or behavior, it does not demonstrate whether students actually benefited from the workshop.

How can career centers improve workshop measurement at scale?

Using structured rubrics, standardized outputs, and AI-supported tracking can help capture consistent evidence of student progress without overwhelming staff capacity.

What is the biggest mistake to avoid in workshop design?

The biggest mistake is treating workshops as one-time events rather than part of a broader system that includes preparation, practice, feedback, and follow-up actions.