Career centers usually get the same advice about alumni mentoring. Start small, recruit enthusiastic alumni, and pair people who seem like a good fit.

That advice is incomplete. Programs rarely struggle because mentoring is a weak idea. They struggle because institutions treat them like a side service instead of shared infrastructure.

For career services leaders, that distinction matters. A lightly managed pilot can create goodwill.

A durable program can support career readiness, alumni engagement, and cross-campus coordination in ways senior leadership will keep funding.

This guide to alumni mentorship programs for career centers focuses on the operating model behind that durability: program structure, matching decisions, mentoring formats, compliance, and measurement.

How Should Career Centers Architect a Program for Scale

A scalable alumni mentorship program starts with institutional alignment, not mentor recruitment. If the program is framed only as a student service, it will compete for scarce staff time. If it is framed as shared campus infrastructure, multiple offices have a reason to sustain it.

A strong starting point is to define the program in three layers. The institutional layer answers why the university should care.

The program layer defines delivery and accountability. The student layer specifies what changes in behavior, confidence, and preparedness.

A commonly missed step is stakeholder mapping. Career services may own the student experience, but Advancement owns alumni relationships, Academic Affairs influences incentives, and Enrollment leaders care about visible student support.

If those offices are brought in late, programs stall on outreach rules, branding, data access, or staffing.

What should the strategic foundation include

Use a short operating charter, not a long concept note. The charter should name:

- Institutional outcomes: Alumni engagement, student career preparation, and stronger cross-office collaboration.

- Program boundaries: Who can participate, what the mentoring period looks like, and who owns escalation.

- Decision rights: Who approves mentor outreach, manages student communications, and reviews risk issues.

- Evidence plan: Which outcomes will be measured and who receives reports.

Iona University offers a useful model through its Gaels Go Further approach, described in the Together Platform overview of alumni mentorship programs.

The example shows senior-level sponsorship and cross-functional implementation rather than a stand-alone career center experiment.

The same source cites a study where 70% of students reported increased confidence in securing employment, 80% felt better prepared for interviews, and 95% were willing to mentor in the future.

These signals help reposition mentoring from a feel-good initiative to an institutional asset.

Practical rule: If your proposal cannot explain why Advancement, Academic Affairs, and student-facing leadership each benefit, the program is still too narrow.

How do you keep a pilot from becoming pilot purgatory

Most pilot programs fail in one of two ways. Some are too ambitious for existing staff capacity. Others are so informal that they generate stories but not evidence.

A better structure is a bounded pilot with enterprise logic. Keep the first cohort manageable, but build the same governance, consent process, reporting cadence, and escalation workflow you would need later at larger scale.

Many centers also need to think in systems rather than events.

The operating discipline described in our guide on scalable systems for career services applies directly here. The program should reduce ad hoc work over time, not create another manual process that depends on one staff member’s memory.

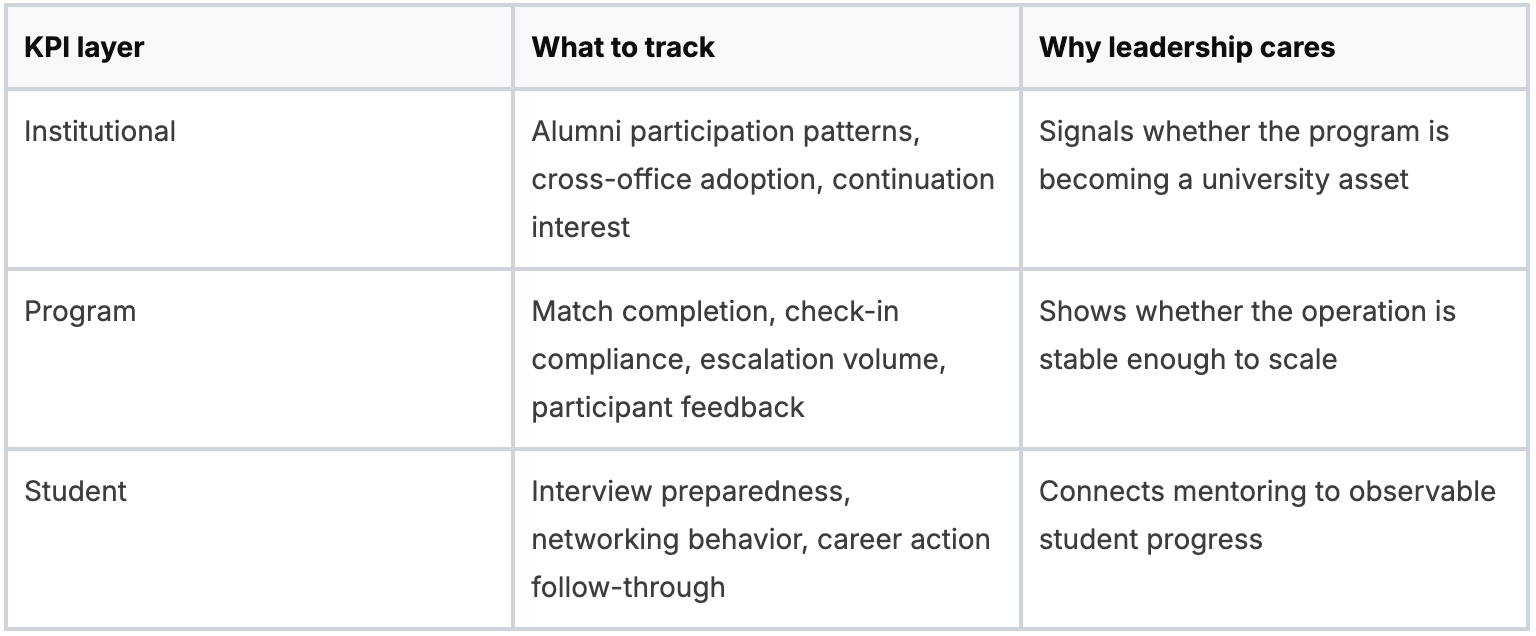

Which KPIs matter at each level

A single dashboard metric will not protect the program in budget conversations. Use a tiered framework.

The point is not to over-measure. It is to prevent the common problem where a program is celebrated anecdotally but cannot justify staffing, technology, or shared ownership.

Where do real trade-offs show up

The main trade-off is control versus coalition. A career center can launch faster by keeping ownership tight. It can scale further by sharing ownership, but only if governance is explicit.

Shared ownership without explicit rules creates duplicate communications, mentor fatigue, and inconsistent expectations.

Another trade-off is prestige versus access. Many institutions want their first cohort to feature highly visible alumni.

That can help launch attention. It can also narrow the mentor pool and make the program overly dependent on a few people.

In practice, reliable alumni with current industry experience and realistic availability often produce a better student experience than marquee names with limited time.

What Are the Trade-offs Between Mentorship Matching Models

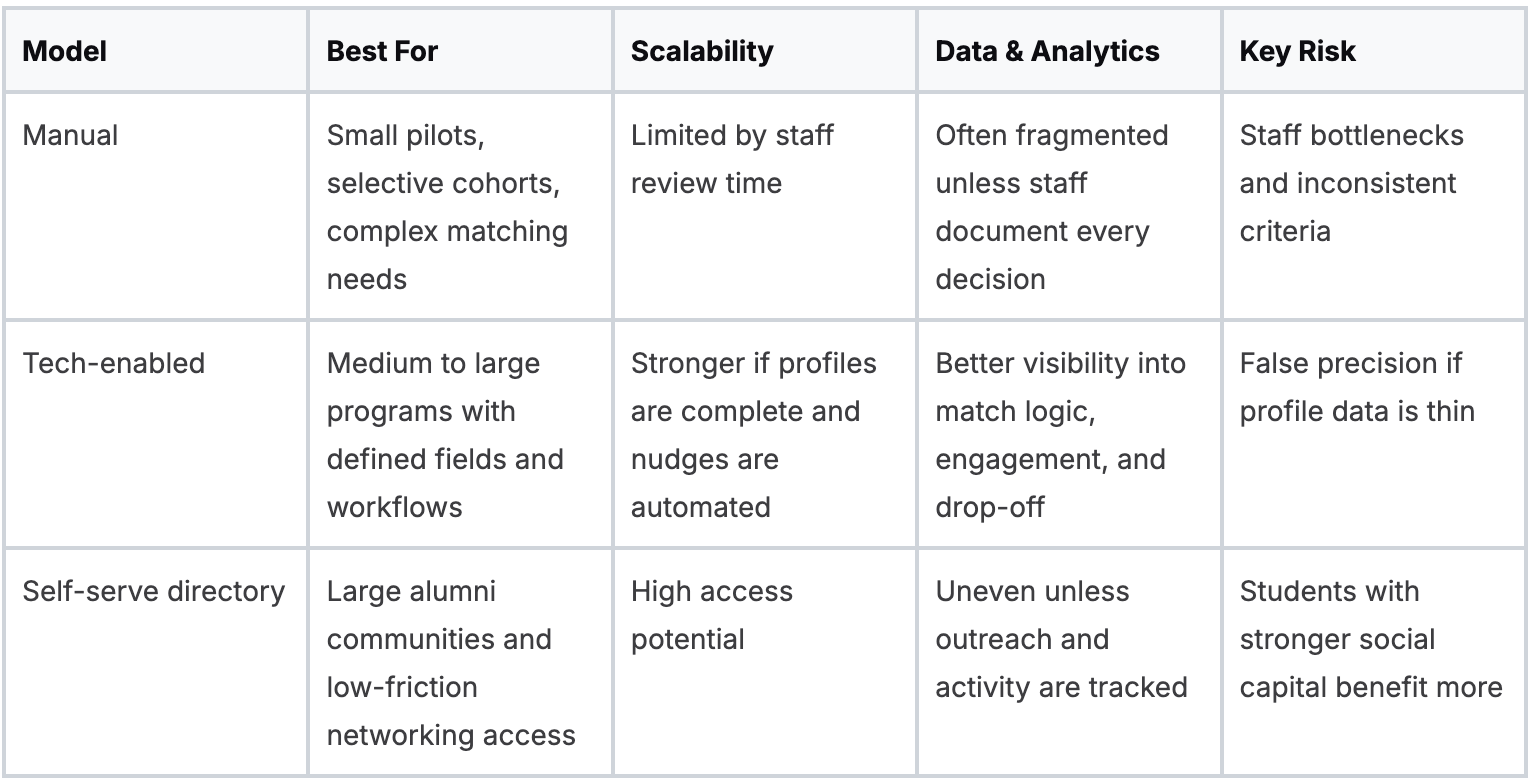

The right matching model depends on staff capacity, data quality, and the level of control your institution needs. Manual matching can produce strong pairings. It also creates bottlenecks. Self-serve directories expand access. They can widen equity gaps. Tech-enabled matching sits in the middle, but only if the underlying data is usable.

Experienced career services professionals (CSPs) know that matching is never just a logistics task.

It is where your advising philosophy becomes operational. Are you optimizing for relationship quality, broad access, speed, identity-based affinity, industry alignment, or measurable outcomes?

You cannot maximize all of those at once.

Which matching models are most common

Most career centers end up choosing among three models:

- High-touch manual matching: Staff review profiles and assign pairs.

- Tech-enabled matching: Software recommends or ranks matches using profile data and stated preferences.

- Self-serve directories: Students browse alumni profiles and initiate contact.

Georgetown University’s Hoya Gateway is a useful named example of a platform-based alumni engagement model.

The lesson from institutions like Georgetown is less about software branding and more about the operating implication. Once the network grows, discovery, communication norms, and data capture need structure.

How do the models compare in practice

What tends to fail with manual matching

Manual matching works best when staff know both populations well and the cohort is intentionally small. It fails when the institution assumes that “personalized” always means “better.”

Without a documented intake framework, staff often default to obvious variables such as major, industry, or geography.

Those variables matter, but they are often poor predictors of whether a conversation will be useful.

A first-generation student exploring consulting may need a mentor who can explain hiring norms and hidden expectations, not a graduate with only the same major.

A more disciplined intake process helps. Career centers evaluating matching criteria often benefit from a structured question design like the one outlined in this intake questionnaire framework for career centers.

The important point is to capture need-state, not just identity markers.

When does tech-enabled matching earn its keep

Tech-enabled matching becomes valuable when the program is large enough that staff judgment alone cannot keep pace. It is most effective when the platform can use richer inputs than static profile fields.

For example, a center may want to match students based on stated career questions, job-search stage, confidence with networking, or documented skill gaps from existing career workflows.

If resume review data, interview preparation data, or advising intake data already exists elsewhere, those signals can improve pair quality when the institution has permission to use them and the integration is governed properly.

One option in that category is Hiration, which can connect student career-readiness activity with counselor workflows and analytics.

In a mentoring context, the practical value is not “AI matching” as a slogan. The practical value is using verified student workflow data to support better triage, nudges, and reporting.

Operational test: If staff cannot explain why a suggested match was made, the model is too opaque for a student-facing program.
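One way to keep a match explainable is to have the scoring step return its reasons alongside the score. The sketch below is illustrative only. The `Profile` fields, the `WEIGHTS` values, and the function names are assumptions made for this example, not features of any particular platform.

```python
from dataclasses import dataclass, field


@dataclass
class Profile:
    """Hypothetical intake fields shared by students and mentors."""
    name: str
    industries: set[str] = field(default_factory=set)
    topics: set[str] = field(default_factory=set)  # e.g. "interview prep"
    first_gen: bool = False


# Assumed weights; a real program would set these from its own priorities.
WEIGHTS = {"industry": 2.0, "topic": 3.0, "first_gen": 1.0}


def score_match(student: Profile, mentor: Profile) -> tuple[float, list[str]]:
    """Return a score plus the plain-language reasons behind it."""
    score = 0.0
    reasons: list[str] = []

    shared_industries = sorted(student.industries & mentor.industries)
    if shared_industries:
        score += WEIGHTS["industry"] * len(shared_industries)
        reasons.append("shared industry: " + ", ".join(shared_industries))

    shared_topics = sorted(student.topics & mentor.topics)
    if shared_topics:
        score += WEIGHTS["topic"] * len(shared_topics)
        reasons.append("mentor can cover: " + ", ".join(shared_topics))

    if student.first_gen and mentor.first_gen:
        score += WEIGHTS["first_gen"]
        reasons.append("both are first-generation")

    return score, reasons


def rank_mentors(student: Profile, mentors: list[Profile]) -> list[tuple]:
    """Rank mentors for one student as (name, score, reasons), best first."""
    scored = [(mentor.name, *score_match(student, mentor)) for mentor in mentors]
    return sorted(scored, key=lambda row: row[1], reverse=True)
```

Staff could show the `reasons` list next to each suggested pairing, so the rationale is visible before anyone confirms the match.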

Why do self-serve directories create uneven outcomes

Self-serve models lower administrative burden and can activate larger alumni pools quickly. They also assume students know how to assess profiles, write outreach messages, and recover from non-response.

That assumption is risky. The students most likely to benefit from alumni access are often the least likely to use an open directory confidently without preparation.

A self-serve model can favor students who already understand professional norms.

That does not make directories a poor choice. It means they work better when paired with guardrails such as:

- Outreach templates: Students need examples of credible first-contact messages.

- Response expectations: Alumni should know what turnaround and boundary norms look like.

- Advisor intervention rules: Staff should know when to step in after silence or poor fit.

The strategic question is simple. Do you want the program to function as a networking marketplace or as an institutionally managed mentoring experience?

Many centers try to run both from the same design, and that is where confusion begins.

How Do You Design Effective Mentoring Formats and Training

Effective mentoring formats create structure without scripting every conversation. If the format is too loose, meetings fade out. If it is too rigid, alumni disengage. The strongest programs define a clear container, a few required milestones, and role-specific training materials that remove ambiguity.

This matters more now because structured mentoring is increasingly expected by students.

According to 2026 mentoring analyses summarized by Mentorloop, 88% of students and young professionals prioritize on-the-job practical experience and 76% view continuous learning through mentors as essential.

The implication for career centers is straightforward. A vague “connect with an alum” offer no longer meets the bar.

Which mentoring formats work best

No single format fits every institutional goal. A practical portfolio often includes:

- One-to-one mentoring: Best for sustained accountability and individualized conversation.

- Group mentoring: Useful when mentor supply is limited or the topic is field-specific.

- Flash mentoring: Short, focused interactions for targeted questions or exploratory access.

One-to-one is usually the flagship format, but it should not be the only format.

Group models can reduce pressure on alumni and increase access for students in emerging fields where mentor supply is thin.

Flash mentoring helps institutions engage alumni who will not commit to a full cycle but are willing to contribute in short bursts.

What should training contain

Training should answer role confusion before it appears. Generic orientation sessions tend to underperform because they focus on enthusiasm rather than boundaries and behaviors.

A solid mentor handbook covers:

- Role definition: Mentors are guides, not recruiters, therapists, or faculty proxies.

- Conversation scope: Career exploration, networking norms, resume feedback, workplace context, and interview preparation are appropriate. Employment guarantees are not.

- Escalation rules: Staff need a path for concerns involving student distress, inappropriate requests, or repeated non-response.

- Time expectations: Alumni need a realistic sense of cadence and duration.

- Examples of useful meetings: The first meeting, a networking strategy session, and a reflection meeting near the end.

The University of Toronto’s Statistical Sciences alumni mentor guidebook is a useful real-world reference point because it treats mentoring as a guided practice, not a casual exchange.

That is the level of operational clarity most programs need.

Tip: If your mentor orientation can be delivered without mentioning boundaries, referral protocols, or meeting agendas, it is still an inspiration session, not training.

How should the first meeting be structured

The first meeting is usually where momentum is won or lost. Many pairs spend too much time on biography and too little time on expectations.

A better first-meeting guide includes four moves:

- Clarify purpose: Why were we matched, and what do we want from this cycle?

- Define communication norms: Email, platform messaging, response windows, rescheduling.

- Choose a first concrete topic: Resume review, industry mapping, networking practice, or interview prep.

- Set the next meeting before ending the call: Programs lose traction when the second meeting remains undefined.

For many students, one of the most concrete discussion topics is interview performance.

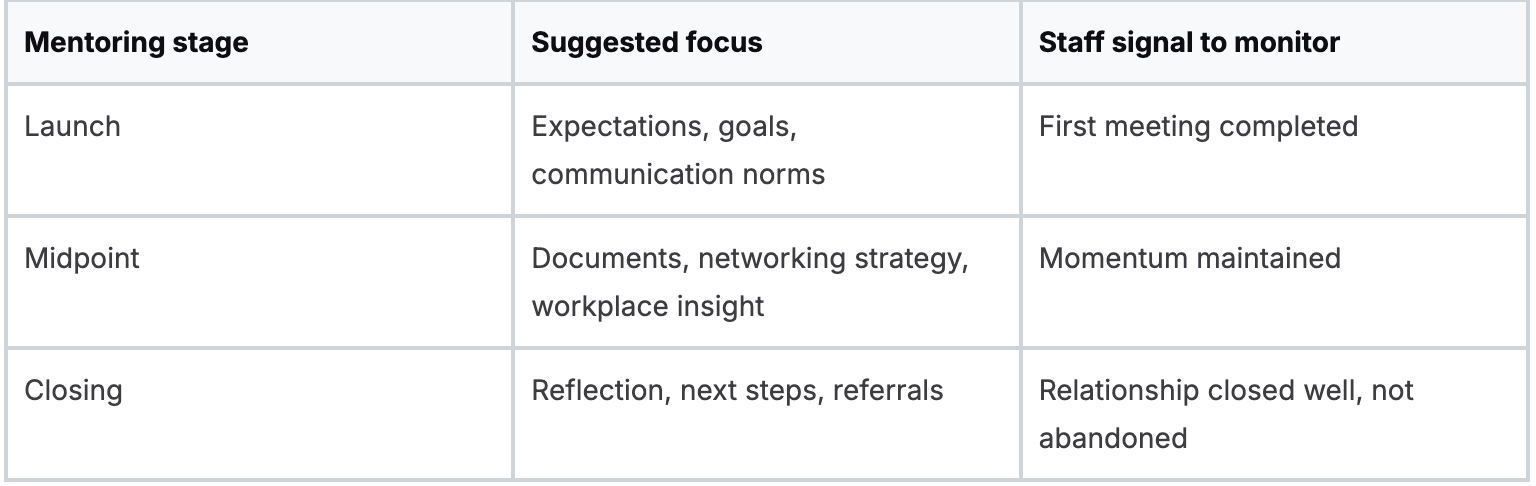

How do you prevent conversations from fizzling out

The simplest prevention tool is a milestone-based structure. Do not ask pairs to “meet regularly” and hope for the best. Give them a sequence.

Centers refining these engagement formats often adapt ideas from broader coaching models used in career centers.

Mentoring and coaching are not identical, but they share a need for consistent prompts, defined roles, and repeatable session design.

Another common failure point is treating all students as equally prepared for mentoring.

Some need explicit training on how to ask for feedback, how to follow up after a meeting, and how to turn advice into action.

Student handbooks should therefore be separate from mentor handbooks. Combining them usually dilutes both.

What Are the Critical Compliance and Tech Integration Guardrails

The core compliance issue is simple. Alumni mentors are third parties relative to student records. If your program shares student information casually, the institution takes on avoidable risk. Compliance should be built into the program design before matching begins, not added after launch.

For US institutions, FERPA is the immediate concern. For institutions working across borders or with international participants, GDPR may also matter. The operational question is not whether your campus has a privacy policy. It is whether the mentoring workflow enforces it.

What student data should be shared

Share the minimum necessary information for a successful match and informed conversation. That usually means profile elements the student has knowingly provided for mentoring purposes, such as interests, goals, and preferred industries.

Avoid informal exports of advising notes, academic records, or sensitive personal context unless your institution has explicitly approved that use and the student has consented appropriately.

Career services teams often underestimate how much “helpful context” becomes risky once it leaves the internal advising environment.

Which guardrails reduce institutional exposure

Several controls do more work than long policy documents:

- Consent at intake: Students should affirm what information can be shared and with whom.

- Mentor code of conduct: Alumni should acknowledge privacy expectations and communication boundaries.

- Platform-based messaging when possible: Centralized communication improves oversight and recordkeeping.

- Escalation routing: Concerns should go back to staff, not circulate informally among volunteers.

Many centers already use student management tools elsewhere in their operation. Reviewing how platforms structure student records can be useful when defining fields and permissions for mentoring.

For example, the student-profile workflow shown in Tutorbase CRM software illustrates the broader principle that not every user should see every field.

Key takeaway: Data minimization is a service design choice, not just a legal one. The less unnecessary context you circulate, the easier the program is to govern.
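Data minimization can be enforced in the workflow rather than left to policy. The sketch below assumes a hypothetical allow-list, `MENTOR_VISIBLE_FIELDS`, and a per-student set of consented fields captured at intake. The field names are illustrative and would need to follow your institution's own approved data use.

```python
# Hypothetical allow-list: fields the program has approved for mentor view.
MENTOR_VISIBLE_FIELDS = {"first_name", "interests", "goals", "preferred_industries"}


def mentor_view(student_record: dict, consented_fields: set[str]) -> dict:
    """Return only fields that are both program-approved and student-consented."""
    allowed = MENTOR_VISIBLE_FIELDS & consented_fields
    return {key: value for key, value in student_record.items() if key in allowed}


# Example: advising notes and GPA never reach the mentor, even if present.
record = {
    "first_name": "Sam",
    "interests": ["consulting"],
    "goals": "explore entry-level roles",
    "gpa": 3.4,
    "advising_notes": "internal only",
}
shared = mentor_view(record, consented_fields={"first_name", "interests", "gpa"})
```

Because a field must appear in both sets, a student's consent alone cannot expose a field the program has not approved, and program approval alone cannot override a student who declined to share.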

How should mentoring tools fit into the campus stack

A mentoring platform should not become another disconnected silo. If students need a separate login, staff have to reconcile duplicate records, or outcomes live outside existing reporting, the program will feel heavier than it should.

The practical priorities are:

- Single sign-on: Reduces friction for students and alumni.

- System mapping: Decide what lives in the student information system, what lives in the career system, and what belongs only in the mentoring platform.

- Unified identifiers: Match records across systems without manual reconciliation.

- Reporting exportability: Institutional research, advancement, and career leadership all need different cuts of the same data.
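To illustrate why unified identifiers matter, here is a minimal sketch of reconciling two exports on a shared key. The `student_id` key and the row shapes are assumptions for this example. Real exports would come from whatever systems your campus uses.

```python
def reconcile(career_rows: list[dict], mentoring_rows: list[dict], key: str = "student_id"):
    """Join two exports on a shared identifier and report rows that do not line up."""
    career_by_id = {row[key]: row for row in career_rows}
    mentoring_by_id = {row[key]: row for row in mentoring_rows}

    # Records present in both systems are merged into one combined row.
    matched = {
        student_id: {**career_by_id[student_id], **mentoring_by_id[student_id]}
        for student_id in career_by_id.keys() & mentoring_by_id.keys()
    }
    # Records present in only one system are the ones staff would otherwise chase by hand.
    only_career = sorted(career_by_id.keys() - mentoring_by_id.keys())
    only_mentoring = sorted(mentoring_by_id.keys() - career_by_id.keys())
    return matched, only_career, only_mentoring
```

When both systems carry the same identifier, the unmatched lists stay short and reviewable. Without one, staff end up matching on names or email addresses, which is where manual reconciliation creeps back in.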

Career centers evaluating vendors should use a due-diligence process that looks beyond feature demos.

This technology due diligence framework for career centers is useful for pressure-testing integration, permissions, and implementation assumptions before procurement.

What matters most is governance. Tools can support compliance. They do not create it on their own.

How Should Career Centers Measure and Improve the Program

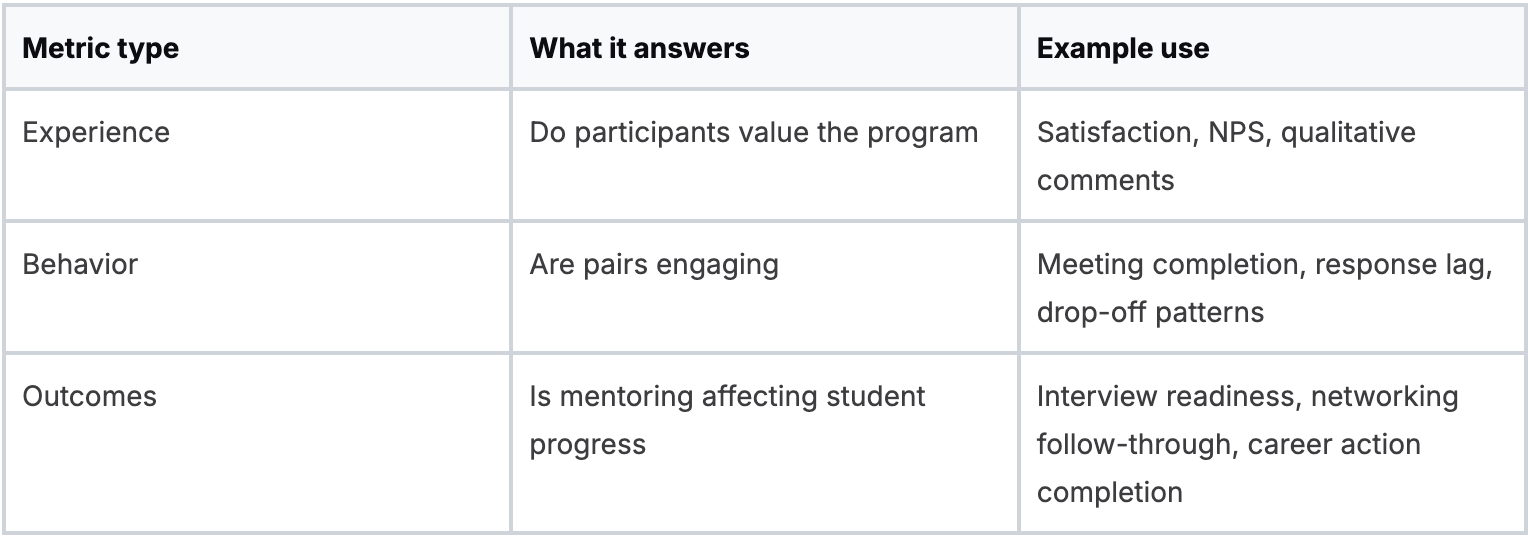

Measurement should tell you which relationships are healthy, which design choices are underperforming, and which outcomes leadership will recognize as worth continued investment. If the only evidence you collect is end-of-program satisfaction, you will not catch operational failures early enough to fix them.

According to Chronus program benchmarks, well-structured programs see participant retention rates of 85%, mismatched pairs can lead to a 35% drop-off, and a useful target is an NPS above 70 from both mentors and mentees.

Those figures are useful because they connect design quality to measurable operational health.
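For teams computing these numbers themselves, the arithmetic is simple. NPS is conventionally the percentage of promoters (ratings of 9 or 10 on a 0 to 10 scale) minus the percentage of detractors (ratings of 0 to 6). The sketch below assumes survey ratings arrive as a plain list of integers and treats retention as completions divided by starts. Both function names are illustrative.

```python
def net_promoter_score(ratings: list[int]) -> float:
    """NPS = % promoters (9-10) minus % detractors (0-6), on a -100 to 100 scale."""
    if not ratings:
        raise ValueError("at least one rating is required")
    promoters = sum(1 for rating in ratings if rating >= 9)
    detractors = sum(1 for rating in ratings if rating <= 6)
    return 100 * (promoters - detractors) / len(ratings)


def retention_rate(started: int, completed: int) -> float:
    """Percentage of participants who started the cycle and also completed it."""
    if started <= 0:
        raise ValueError("started must be positive")
    return 100 * completed / started


# Example: 6 promoters and 2 detractors out of 10 responses gives an NPS of 40.
mentor_nps = net_promoter_score([10, 9, 9, 8, 7, 6, 10, 9, 10, 3])
cohort_retention = retention_rate(started=40, completed=34)  # 85.0
```

Calculating NPS separately for mentors and mentees keeps one group's enthusiasm from masking the other's frustration.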

Which metrics should sit on the dashboard

A workable dashboard uses three categories.

The key is sequence. Behavior data should trigger intervention before outcome data disappoints. If pairs are slow to schedule, missing check-ins, or abandoning platform messaging, staff can act.

If you wait for end-of-cycle reflection forms, the student experience is already lost.

How do you identify weak points early

Most programs have visible and hidden failure modes. Visible problems include unmatched students or mentor complaints. Hidden problems include low-value meetings, students who stop responding after one call, and alumni who stay enrolled but disengage.

Set up a review rhythm around a few practical questions:

- Are first meetings happening on time?

- Which pairs have stalled between meetings one and two?

- Which mentors have multiple students needing intervention?

- Which student groups are participating less fully than others?

Counselor dashboards are important here. Systems that combine engagement signals with broader career-readiness workflows can help staff spot at-risk pairings faster and produce cleaner reporting.

Career centers building that reporting layer often start with a framework like this one on career center metrics, then adapt it to mentoring-specific use cases.
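The first two review questions can be turned into a simple early-warning check. This is a minimal sketch: the `Pair` fields and the 14-day and 30-day windows are assumptions chosen for illustration, not recommended thresholds.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Pair:
    """Hypothetical record of one mentor-student pairing."""
    pair_id: str
    matched_on: date
    first_meeting: Optional[date] = None
    second_meeting: Optional[date] = None


# Assumed windows; tune these to your own program calendar.
FIRST_MEETING_WINDOW = timedelta(days=14)
SECOND_MEETING_WINDOW = timedelta(days=30)


def flag_pair(pair: Pair, today: date) -> Optional[str]:
    """Return a short reason if the pair needs staff attention, else None."""
    if pair.first_meeting is None:
        if today - pair.matched_on > FIRST_MEETING_WINDOW:
            return "no first meeting"
        return None
    if pair.second_meeting is None and today - pair.first_meeting > SECOND_MEETING_WINDOW:
        return "stalled after first meeting"
    return None


def pairs_needing_review(pairs: list[Pair], today: date) -> dict[str, str]:
    """Map pair_id to the reason it was flagged, skipping healthy pairs."""
    flags = {pair.pair_id: flag_pair(pair, today) for pair in pairs}
    return {pair_id: reason for pair_id, reason in flags.items() if reason}
```

Running a check like this weekly gives staff a short intervention list while there is still time in the cycle to act on it.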

What should you report to stakeholders

Different stakeholders need different summaries. A dean may care about student participation patterns and academic alignment.

Advancement may care about alumni engagement quality. Career services leadership may care about intervention load and student follow-through.

A single stakeholder memo should usually include:

- Program health: Are matches active, stable, and completing the cycle?

- User sentiment: Are mentors and mentees willing to participate again?

- Student progress signals: What career actions are students taking after mentoring?

- Operational recommendations: What needs to change in matching, training, or staffing?

Reporting advice: Separate “program ran” metrics from “program mattered” metrics. Institutions often collect the first category and assume it proves the second.

How do you create a real improvement loop

Continuous improvement requires more than collecting more feedback. It requires deciding which design element each metric can change.

If drop-off clusters around early meetings, adjust orientation and first-meeting guides. If students like the experience but outcomes are vague, tighten goal-setting and milestone prompts.

If certain majors or populations engage less, revisit recruitment, communication, and matching assumptions.

A good improvement loop has five moves:

- Define the intended behavior

- Track whether it happened

- Locate where the process broke

- Change one design element

- Re-measure in the next cycle

That discipline is what turns an alumni mentorship program from a calendar item into an institutional capability.

Wrapping Up

A strong alumni mentorship program goes beyond connections.

It builds a system that improves student readiness, activates alumni, and aligns stakeholders around measurable outcomes.

Career centers scaling these programs often need tools that reduce coordination effort and make outcomes visible. Hiration supports that by connecting student readiness data with counselor workflows and reporting, which helps mentoring operate as a structured, measurable part of career services within a secure, FERPA- and SOC 2-compliant platform.

The goal is not just to run the program, but to make it sustainable, scalable, and institutionally valuable.