How should career center leaders structure teams, priorities, and data systems for impact?

Career center leaders should build competency-based teams, align priorities directly with institutional goals, manage stakeholders through targeted influence, and establish clear decision rhythms supported by governed data systems. When team development, strategy, and data are integrated, the career center operates as institutional infrastructure rather than a reactive service unit.

Career centers are expected to deliver stronger student outcomes and clearer institutional impact, but many still operate without a defined leadership system.

Staff development is inconsistent, priorities are unclear, stakeholder conversations lack influence, and data is not tied to decisions - leaving teams busy but unable to prove value.

At an institutional level, career services directly affects retention, graduate outcomes, and overall reputation.

Without structured systems, aligning with university strategy and justifying resources becomes difficult.

This guide breaks down five areas that shape how a career center actually operates: team development, priority setting, stakeholder management, decision rhythms, and the leadership pitfalls that limit long-term impact.

How Do You Build a Competency-Based Team Development Plan?

Build the team around observable competencies, not generic job descriptions. Then use Individual Development Plans to connect staff growth to the center’s operating model, mission, and future service design.

Career centers often have capable staff and weak development architecture. People learn through goodwill, crisis, and imitation.

That works for a while, then stalls. Staff do not know what advancement requires, supervisors give inconsistent feedback, and the office struggles to adapt when technology or institutional expectations shift.

Federal guidance on career development is useful here.

According to the U.S. Office of Personnel Management’s guidance on career development, effective Individual Development Plans should include employee profiles, clear short- and long-term goals, and development objectives tied to organizational mission. They should also document a mix of activities such as classroom learning, web-based training, rotational assignments, and conferences.

Start with a competency matrix

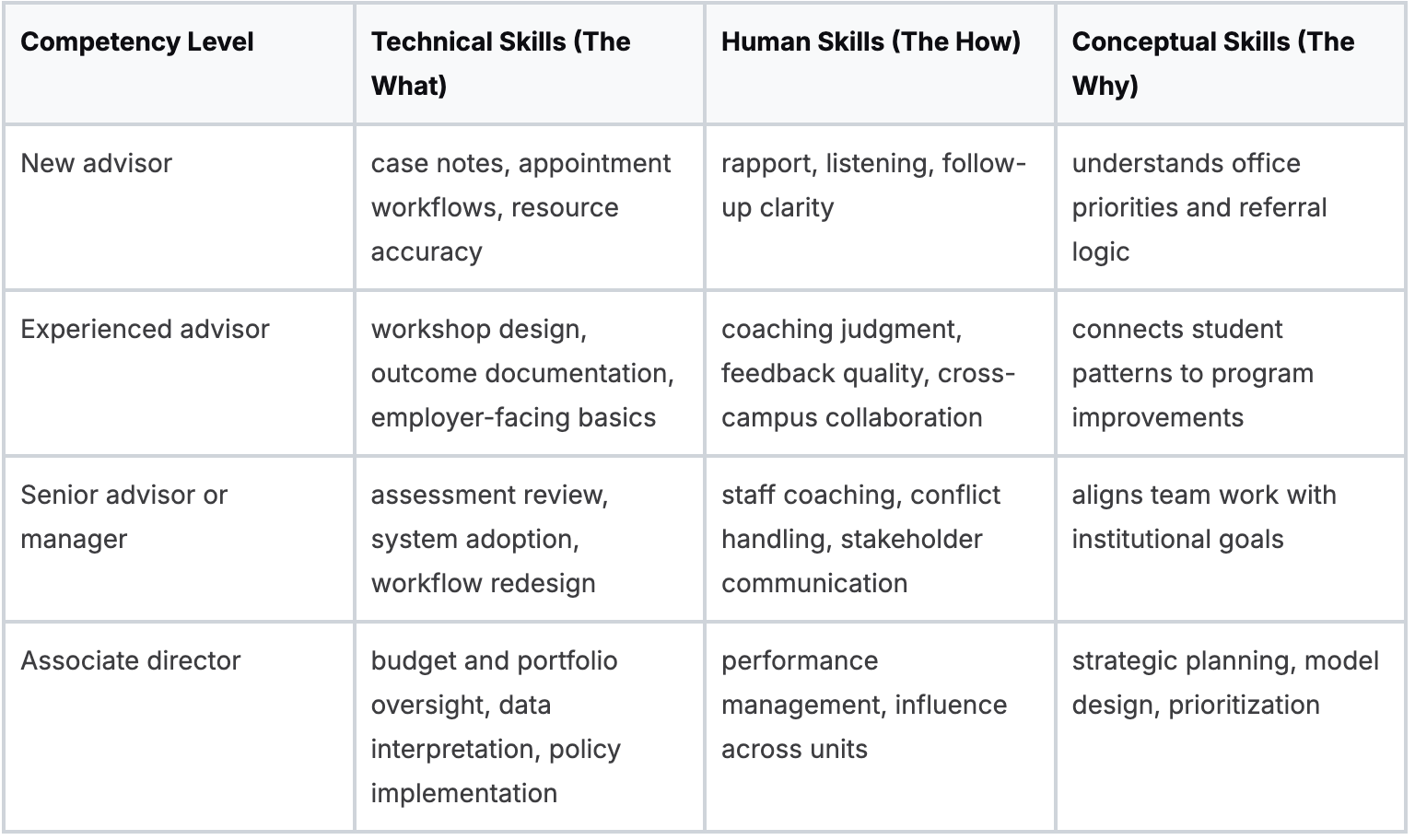

Use Katz's three domains to structure the framework.

This matrix does two things. It tells staff how to grow, and it tells supervisors what evidence to look for.


Make IDPs specific enough to manage

A weak IDP reads like a wish list. A useful one names the competency gap, the development activity, the timeline, and the evidence that learning transferred into practice.

For example:

- Technical growth: lead configuration testing for a student engagement workflow

- Human growth: observe two advisors and deliver structured coaching feedback

- Conceptual growth: draft a proposal linking one office initiative to a university priority
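One way to keep IDP entries manageable is to record them in a fixed shape and flag any entry that is missing a required part. The sketch below is illustrative only: the `IDPEntry` schema, its field names, and the sample values are assumptions, not an OPM format.

```python
from dataclasses import dataclass
from datetime import date

# Katz's three skill domains, as used in this guide.
DOMAINS = {"technical", "human", "conceptual"}


@dataclass
class IDPEntry:
    """One development goal in an IDP (hypothetical schema)."""
    domain: str          # one of DOMAINS
    competency_gap: str  # what the staff member cannot yet do reliably
    activity: str        # the development activity that addresses the gap
    due: date            # timeline for completing the activity
    evidence: str        # how a supervisor will see the learning in practice

    def problems(self) -> list[str]:
        """Return the reasons this entry still reads like a wish list."""
        issues = []
        if self.domain not in DOMAINS:
            issues.append(f"unknown domain: {self.domain!r}")
        for field_name in ("competency_gap", "activity", "evidence"):
            if not getattr(self, field_name).strip():
                issues.append(f"missing {field_name}")
        return issues


entry = IDPEntry(
    domain="technical",
    competency_gap="Has not led a platform configuration change",
    activity="Lead configuration testing for a student engagement workflow",
    due=date(2026, 5, 29),  # sample date, chosen for illustration
    evidence="",            # left blank on purpose to show the check
)
print(entry.problems())  # ['missing evidence']
```

An entry with no stated evidence is the most common version of the wish list: the activity happens, but nobody can say whether it changed practice.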

The development mix matters. OPM is explicit that growth should include multiple formats, not only formal training. In career centers, that usually means some mix of:

- Formal learning: workshops, association training, policy sessions

- Rotational exposure: short-term ownership of assessment, employer outreach, or first-destination reporting

- Mentorship: internal or external guidance on leadership judgment

- Applied stretch work: a live project with visible consequences

Define coaching quality, not just coaching quantity

Many teams say they want advisors to become stronger coaches, but they never define what “good” looks like.

Observable coaching traits are more useful than personality labels.

Key takeaway: Staff development becomes credible when promotion standards are visible before the vacancy appears.

Tie development to the operating model

A centralized center may prioritize consistency, systems fluency, and shared employer protocols. A federated model needs more partnership management, influence, and contextual judgment inside colleges.

The team architecture should match the organizational architecture.

If you want a practical extension of that design work, this resource on advisor development frameworks in higher ed is a useful next step.

The strongest career center leadership guide is not a checklist for running meetings or approving workshops. It is a system for making choices under institutional constraint.

Structure, priorities, stakeholder narratives, data rhythms, and staff development have to reinforce one another. When they do, the center stops functioning as a busy service unit and starts operating as institutional infrastructure.


How Do You Set Priorities That Align with Institutional Goals?

Start with the university’s strategic language, then translate it into a small set of career center outcomes and operating measures that your team can influence.

Most centers say they align with institutional goals. Fewer can show the line from provost language to advisor behavior. That gap is where budget requests get challenged and ad hoc projects multiply.

The practical move is to build a goal-to-metric map. Take one institutional priority at a time. Then ask which student behaviors, service interactions, and outcome measures reasonably connect to that priority.

The Career Center and similar offices highlighted in the CPI best-practices report show what mature alignment looks like.

They track far more than appointment volume, including disaggregated data by college and class, first-destination outcomes, graduate school pathways, repeat use, and the percentage of students referred by friends, according to Best Practices in Career Services Management.

Build the map backward

Start with executive priorities such as retention, equity, alumni engagement, or graduate outcomes. Then work backward.

- Retention priority: focus on early-year student engagement and referral pathways

- Equity priority: disaggregate participation and outcomes across student populations

- Academic integration priority: track faculty-connected programming and embedded touchpoints

- Graduate outcomes priority: connect advising, employer access, and first-destination reporting

Leaders often go wrong by choosing measures because they are easy to count. Appointment totals, event attendance, and workshop volume matter operationally, but they do not answer the senior leader’s question: what changed for students?
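A goal-to-metric map can be as simple as a lookup table that every dashboard metric is checked against. The sketch below assumes hypothetical metric names; the four priorities come from the list above, but the measures attached to them are examples, not a standard.

```python
# Hypothetical goal-to-metric map: institutional priority -> measures the
# career center can influence. Metric names are illustrative.
GOAL_TO_METRIC = {
    "retention": ["first_year_engagement_rate", "referral_pathway_use"],
    "equity": ["participation_by_population", "outcomes_by_population"],
    "academic_integration": ["faculty_connected_programs", "embedded_touchpoints"],
    "graduate_outcomes": ["first_destination_rate", "employer_access"],
}

# Volume counts matter operationally but do not show what changed for students.
ACTIVITY_COUNTS = {"appointment_total", "event_attendance", "workshop_volume"}


def review_dashboard(metrics: list[str]) -> dict[str, list[str]]:
    """Sort dashboard metrics into mapped, activity-only, and unmapped groups."""
    mapped = {m for names in GOAL_TO_METRIC.values() for m in names}
    result = {"mapped": [], "activity_only": [], "unmapped": []}
    for metric in metrics:
        if metric in mapped:
            result["mapped"].append(metric)
        elif metric in ACTIVITY_COUNTS:
            result["activity_only"].append(metric)
        else:
            result["unmapped"].append(metric)
    return result


print(review_dashboard(["first_destination_rate", "event_attendance", "swag_given"]))
# {'mapped': ['first_destination_rate'], 'activity_only': ['event_attendance'], 'unmapped': ['swag_given']}
```

Anything that lands in the unmapped group is a candidate for removal or for an honest conversation about which priority it serves.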

Use fewer priorities than you want

Experienced directors get overloaded because they treat every institutional request as strategic. Not every request is. Some are politically important, but they are still side work.

A workable annual plan usually has:

- A few institutional outcomes the center is trying to influence

- A short list of office-level KPIs that indicate progress

- Explicit non-priorities that help staff understand what will not get added this term

Key takeaway: A career center that cannot name its non-priorities becomes a campus convenience office.

A strong dashboard tells a story in layers. Executives see outcome movement. Associate directors see pipeline and population patterns.

Frontline teams see activity and quality signals they can act on quickly.

For leaders building that translation layer, this guide to a career center strategy framework in higher ed is a useful operational complement.

What Does Effective Stakeholder Management Involve?

Effective stakeholder management means translating career services into each stakeholder’s problem set, using evidence they recognize and a cadence they can trust.

Too many leaders treat stakeholder management as updates, committees, and goodwill. That keeps the center visible, but not influential.

Influence starts when career services data solve somebody else’s institutional pressure.

The simplest way to manage this is an influence-interest matrix.

Map provosts, deans, faculty, enrollment leaders, employers, alumni teams, and student affairs partners by two questions: how much institutional power do they hold, and how much do they currently care about your outcomes?

Tailor the narrative by stakeholder

The same evidence should not be presented the same way to every audience.

According to NACE’s The Value of Career Services, graduates who use career services receive 1.8 job offers on average, compared with 1.0 for non-users. Career center leaders can use that difference to communicate value beyond basic engagement counts.

That statistic lands differently depending on the room:

- Provost: institutional contribution to graduate outcomes and student value

- Dean: program-level employability and student demand in a specific college

- Board member: visible return on institutional investment

- Faculty leader: evidence that career services reinforces, rather than competes with, academic goals


Separate allies from sponsors

An ally says positive things about your office. A sponsor allocates resources, opens doors, or changes policy barriers. Good leaders know the difference.

A dean who likes your internship programming but never makes space in the curriculum is an ally. A dean who asks department chairs to include career touchpoints in key courses is a sponsor.

That distinction changes how you spend time. If a stakeholder has high influence and low current interest, they need concise evidence and a low-friction ask.

If they have high interest and low influence, they may be a coalition partner.
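The influence-interest matrix can be sketched as a small classification rule. The 1 to 5 scoring scale, the threshold, and the sample stakeholder are assumptions for illustration. Only two of the four quadrant responses come from this guide; the other two are marked as assumptions in the code.

```python
from typing import NamedTuple


class Stakeholder(NamedTuple):
    name: str
    influence: int  # 1 (low) to 5 (high); the scale is an assumption
    interest: int   # 1 (low) to 5 (high); current interest in your outcomes


def approach(s: Stakeholder, threshold: int = 3) -> str:
    """Suggest an engagement approach from the stakeholder's quadrant."""
    high_influence = s.influence >= threshold
    high_interest = s.interest >= threshold
    if high_influence and not high_interest:
        return "concise evidence and a low-friction ask"
    if high_interest and not high_influence:
        return "possible coalition partner"
    if high_influence and high_interest:
        return "cultivate as a sponsor"  # assumption, not stated in this guide
    return "keep informed"               # assumption, not stated in this guide


dean = Stakeholder("Dean (hypothetical)", influence=5, interest=2)
print(approach(dean))  # concise evidence and a low-friction ask
```

The scores themselves are judgment calls, so revisit them whenever leadership or institutional priorities change.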

Make faculty partnership concrete

Faculty relationships often fail because career centers ask for “collaboration” in the abstract. Better asks are specific:

- review an employer panel list for curricular fit

- nominate a gateway course for embedded career content

- identify one point in the semester where career content belongs

- surface which professional norms students in the major routinely miss

For leaders trying to strengthen that dimension, this resource on faculty partnerships in higher ed offers a practical lens on structuring those conversations.

Tip: The meeting is not the deliverable. The policy change, referral pathway, or shared metric is the deliverable.

How Can Leaders Establish Effective Data-Driven Decision Rhythms?

Leaders need a fixed cadence that separates daily operations from monthly performance review and quarterly strategic adjustment, supported by clear data ownership and privacy rules.

Many centers say they are data-driven when they really mean they have reports. Decision rhythm is different. It is the operating pattern that tells staff when data are reviewed, who interprets them, and which decisions follow.

Without that rhythm, every issue feels urgent. Staff meetings become a blend of anecdotes, system complaints, and random brainstorming.


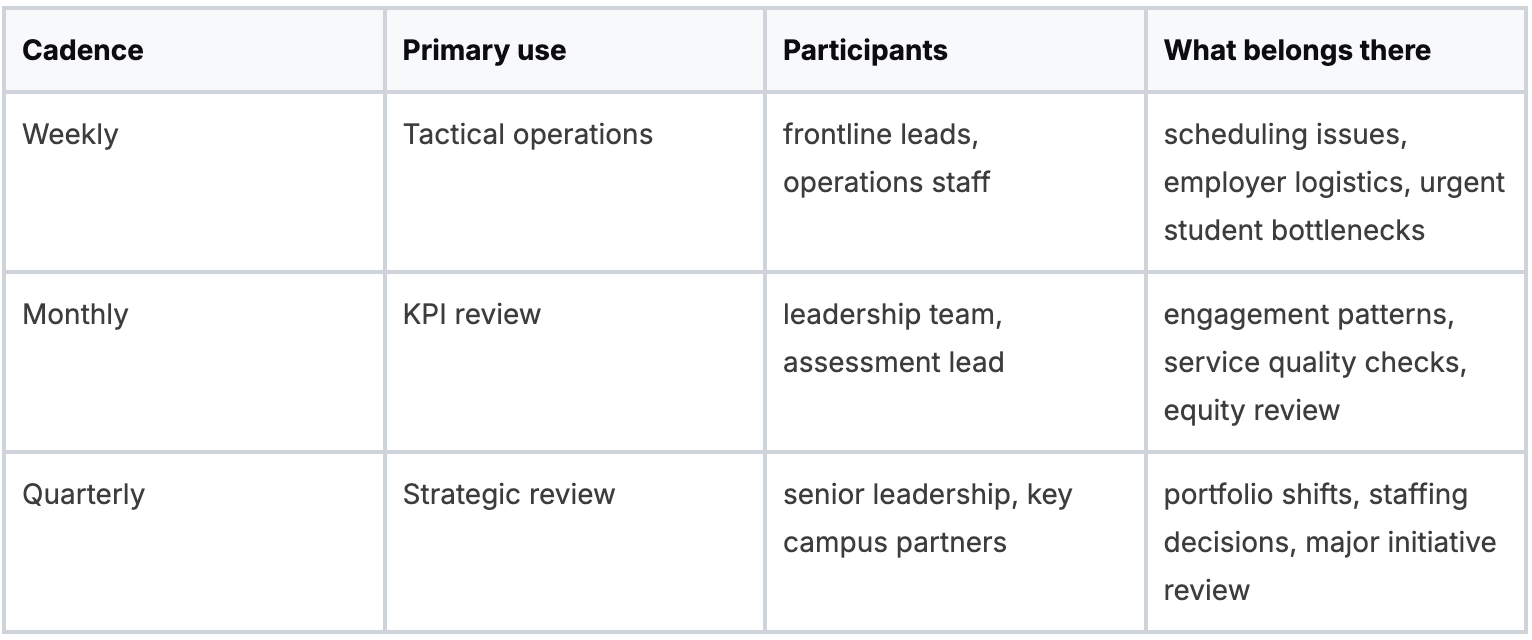

Use different cadences for different decisions

A single meeting cannot serve every purpose.

The discipline matters more than the format. Weekly meetings should not drift into strategy. Quarterly reviews should not get consumed by event troubleshooting.
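A lightweight way to enforce that discipline is to tag each agenda item by type and flag anything that belongs in a different meeting. In the sketch below, the item types and their mapping to weekly, monthly, and quarterly meetings are assumptions based on the cadences described in this section.

```python
# Assumed mapping from agenda item type to the meeting that should own it.
CADENCE_FOR = {
    "operations": "weekly",
    "performance": "monthly",
    "strategy": "quarterly",
}


def off_topic(meeting: str, agenda: list[tuple[str, str]]) -> list[str]:
    """Return titles of agenda items that belong in a different meeting.

    Each agenda entry is a (title, item_type) pair. Items with an
    unrecognized type are also flagged so someone has to classify them.
    """
    return [title for title, kind in agenda if CADENCE_FOR.get(kind) != meeting]


weekly_agenda = [
    ("Career fair logistics", "operations"),
    ("Employer strategy reset", "strategy"),
]
print(off_topic("weekly", weekly_agenda))  # ['Employer strategy reset']
```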

Treat data governance as leadership work

Data governance often gets pushed to the systems person. That is too narrow.

Career center leaders need clear rules on:

- Definition control: what counts as engagement, referral, employer activity, and outcome

- Access permissions: who can view student-level information and who only sees aggregate reporting

- Privacy standards: FERPA and student privacy safeguards in reporting, exports, and vendor workflows

- Retention practices: what is stored, where it lives, and when it is removed

If these choices are vague, your center will eventually produce conflicting reports to different audiences. That damages credibility faster than weak outcomes.
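The access-permission rule can be made concrete with a single reporting function that decides what each role sees. This is a minimal sketch: the role names and record fields are hypothetical, and it illustrates the split between student-level and aggregate views, not FERPA compliance.

```python
from collections import Counter
from typing import Union

# Hypothetical roles allowed to see student-level rows. Every other role
# receives aggregate counts only.
STUDENT_LEVEL_ROLES = {"advisor", "assessment_lead"}


def report_for(role: str, records: list[dict]) -> Union[list[dict], dict[str, int]]:
    """Return student-level rows or per-college counts, depending on role."""
    if role in STUDENT_LEVEL_ROLES:
        return records
    return dict(Counter(r["college"] for r in records))


records = [
    {"student_id": "S1", "college": "Business"},
    {"student_id": "S2", "college": "Business"},
    {"student_id": "S3", "college": "Engineering"},
]
print(report_for("dean_office", records))  # {'Business': 2, 'Engineering': 1}
```

Routing every export through one function like this also supports definition control, because each audience draws on the same underlying records.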

Choose technology for institutional fit

Feature comparisons are less important than operating fit. In higher education, a tool succeeds when it integrates cleanly, protects student data, and has an implementation model your staff can absorb.

The right questions are practical:

- does it support SSO or SAML

- can it align with current campus systems

- what implementation support is included

- what security documentation can the vendor provide

- what staff workflow changes are required

Whichever platform a center chooses, the decision should be based on governance, interoperability, and staff adoption requirements rather than novelty.

A useful companion resource is this guide to career center dashboard best practices, especially when you are deciding what should be visible at each management level.

Key takeaway: A dashboard does not improve decision quality unless someone owns the meeting where its numbers are interpreted.

What Are the Most Common but Least Discussed Leadership Pitfalls?

The hardest leadership failures are subtle. They look like competence from the inside while steadily weakening strategic impact, team trust, and equity of service.

The first pitfall is mistaking smooth operations for institutional value. A center can run on time, answer email quickly, and produce polished events while failing to influence retention, outcomes, or academic strategy.

Efficient activity is still activity.

The second is scaling before proving efficacy.

Leaders often expand a program because the first round felt well received. If you have not established what success looks like and whether the intervention worked across student groups, scaling mostly multiplies ambiguity.

Change fatigue usually starts in the middle

Most career center change efforts fail in the layer between director and frontline advisor. Senior leaders announce a new platform, a new reporting model, or a new employer strategy.

Frontline staff absorb the extra work. Middle managers carry the confusion.

That is why leadership development matters.

Katz's model is useful here because career center managers need technical skill, human skill, and conceptual skill in balance.

In practice, many are promoted for advising excellence and then asked to manage systems, coach staff, and make strategic trade-offs without support.


Bias is a leadership risk, not a side issue

One of the least discussed failures is unexamined bias in service delivery and supervision. This is not only about values. It directly affects whether students trust the center enough to return.

The challenge is sharper than many leaders admit.

In Check Your Bias at the Career Center Door, the Career Leadership Collective argues that when leaders allow bias to shape guidance, students from underserved populations can disengage entirely. The post also argues that mainstream leadership guidance rarely offers structured self-audit or accountability on bias in career services practice.

That has practical implications:

- a staff member may over-direct some students and under-challenge others

- employer preparation may assume familiarity with professional norms students were never taught

- “polish” may become coded language that hides classed or racialized expectations

- outreach plans may favor already-confident student populations

Tip: If underrepresented students stop returning after first contact, treat that as a service quality signal, not a student motivation problem.
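To treat return behavior as a service quality signal, a center first has to see it by population. The sketch below computes the share of students in each group who came back after a first visit. The visit-log shape and the group labels are hypothetical, and a real analysis would also need a fixed time window and enough students per group to be meaningful.

```python
from collections import defaultdict


def return_rate_by_group(visits):
    """Share of students per group with at least two visits.

    visits: iterable of (student_id, group) pairs, one pair per visit.
    """
    counts = defaultdict(lambda: defaultdict(int))  # group -> student -> visits
    for student_id, group in visits:
        counts[group][student_id] += 1
    rates = {}
    for group, per_student in counts.items():
        returned = sum(1 for n in per_student.values() if n >= 2)
        rates[group] = returned / len(per_student)
    return rates


visits = [
    ("a", "first_gen"), ("a", "first_gen"),  # student a returned
    ("b", "first_gen"),                      # student b did not
    ("c", "continuing_gen"), ("c", "continuing_gen"),
]
print(return_rate_by_group(visits))  # {'first_gen': 0.5, 'continuing_gen': 1.0}
```

A gap between groups does not explain itself, but it tells leaders where to start asking about service quality.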

Run a leadership self-audit

A useful self-audit asks:

- Where do we confuse fairness with sameness?

- Which student groups receive the most relational follow-up?

- Where do professional norms remain unstated in our programming?

- Which staff decisions are based on evidence, and which are based on instinct dressed up as expertise?

Loyola Marymount University is often cited in discussion of structural approaches to partnership work that avoid assumptions based on identity.

The broader lesson is that equity work improves when leaders move from aspiration to operating design.


Wrapping Up

Strong career center leadership is not defined by activity levels, but by the systems that hold everything together - how teams develop, how priorities are chosen, how decisions are made, and how outcomes are demonstrated.

When these elements reinforce each other, the center moves from reactive service delivery to a more stable, institutionally aligned model.

For teams working to operationalize that shift, having the right infrastructure in place becomes critical.

This is where Hiration can support your execution - bringing together career assessments, AI-powered resume optimization, interview simulation, and a dedicated counselor module to manage cohorts, workflows, and analytics, all within a secure, FERPA and SOC 2-compliant environment.

The goal is not to add more tools, but to build a system where staff effort, student experience, and institutional outcomes are connected, consistent, and measurable.

Career Center Leadership — FAQs

What defines a strong career center leadership model?

A strong model connects team development, strategic priorities, stakeholder influence, and data systems into one cohesive operating structure that supports institutional outcomes.

Why should teams be built around competencies instead of roles?

Competency-based structures clarify expectations, standardize development, and create measurable criteria for performance, growth, and promotion across the team.

How do career centers align priorities with university strategy?

Leaders translate institutional goals like retention or graduate outcomes into specific student behaviors, service interactions, and measurable KPIs the career center can influence.

What is the biggest mistake in priority setting?

Trying to do too much. Without clearly defined priorities and non-priorities, teams become reactive and lose focus on high-impact institutional outcomes.

How should career centers manage stakeholders effectively?

They should tailor messaging to each stakeholder’s priorities, distinguish between allies and sponsors, and focus on outcomes that solve institutional problems.

What are data-driven decision rhythms in career services?

These are structured cadences (weekly, monthly, and quarterly) that define when data is reviewed, who interprets it, and what decisions are made based on it.

Why is data governance important for leadership?

Clear definitions, access controls, and privacy standards ensure consistent reporting, protect student data, and maintain institutional credibility.

What are common leadership pitfalls in career centers?

Common issues include focusing on activity over outcomes, scaling programs without proof of effectiveness, and lacking structured approaches to equity and bias in service delivery.