How should career centers implement AI copilots without losing control?

Career centers should implement AI copilots as part of a structured advising system, not as isolated tools. Effective use requires clear workflows across the pre-session, in-session, and post-session stages, defined handoff rules to human advisors, strong data privacy safeguards, and a governance framework that ensures consistency, compliance, and measurable impact.

Career centers are under pressure to scale personalized advising while navigating a surge in AI tools, yet most implementations remain fragmented.

Teams adopt chatbots, resume tools, and copilots in isolation, often adding complexity instead of reducing workload.

At an institutional level, this directly affects student outcomes, data privacy, and efficiency. Without a structured approach, AI can add risk without delivering impact.

This guide outlines how to implement AI copilots across the advising lifecycle (pre-session, in-session, and post-session) while maintaining governance, clear handoffs, and institutional control.

How do AI copilots assist across different career advising stages?

AI copilots streamline advising by handling administrative intake pre-session, summarizing action items during meetings, and managing personalized follow-ups post-session. They map aptitudes to careers during early exploration and generate tailored mock interview questions during the preparation stage, freeing you for high-impact mentoring.

According to a late 2025 NACE Quick Poll, 76% of college career centers now use AI tools with students, a massive jump from just 20% in 2023.

To leverage this effectively, career services professionals must integrate AI Career Advising Assistants strategically across the entire student journey:

- Pre-Session (Intake & Triage): Deploy AI chatbots to handle 24/7 scheduling requests and basic resume formatting queries. Tools like Creatrix Campus virtual assistants capture student intent, graduation timelines, and major details before they walk through your door, giving advisors immediate context.

- In-Session (Documentation): Use AI transcription and summarization tools to document the meeting in real time. This lets you stay fully present and empathetic with the student instead of frantically typing notes.

- Post-Session (Action & Follow-Up): AI generates tactical action plans. For instance, the University of Richmond actively guides students to use AI to generate up to 12 potential behavioral interview questions using the STAR method, tailored to their specific resume experiences. Furthermore, you can automate post-experience data collection. The Queens College Experiential Education Office uses the NACE Competency Assessment Tool to quantify specific skill gains students make during internships. AI copilots can seamlessly administer these assessments post-internship to track long-term outcomes.
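To make the pre-session handoff concrete, here is a minimal Python sketch of how an intake assistant's output might be turned into a short advisor briefing. The `IntakeRecord` fields mirror the details mentioned above (intent, graduation timeline, major); the class, the intent labels, and the `advisor_brief` helper are hypothetical and are not part of Creatrix Campus or any other product.

```python
from dataclasses import dataclass, field


# Hypothetical intake record: fields mirror what a pre-session assistant
# might capture (stated intent, graduation timeline, major).
@dataclass
class IntakeRecord:
    student_id: str
    major: str
    graduation_term: str  # e.g. "Spring 2027"
    intent: str  # e.g. "resume_review", "interview_prep", "exploration"
    notes: list[str] = field(default_factory=list)


# Hypothetical mapping from stated intent to the kind of support the session needs.
INTENT_TO_FOCUS = {
    "exploration": "aptitude-to-career mapping",
    "resume_review": "resume feedback",
    "interview_prep": "mock interview questions",
}


def advisor_brief(record: IntakeRecord) -> str:
    """Summarize intake details so the advisor starts the session with context."""
    focus = INTENT_TO_FOCUS.get(record.intent, "general advising")
    lines = [
        f"Major: {record.major}",
        f"Expected graduation: {record.graduation_term}",
        f"Session focus: {focus}",
    ]
    # Append any free-text notes the student left during intake.
    lines.extend(f"Note: {note}" for note in record.notes)
    return "\n".join(lines)
```

Unrecognized intents fall back to "general advising" so the briefing never blocks scheduling; the advisor can refine the focus once the session starts.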

Also Read: How should career centers evaluate career tech platforms before committing?

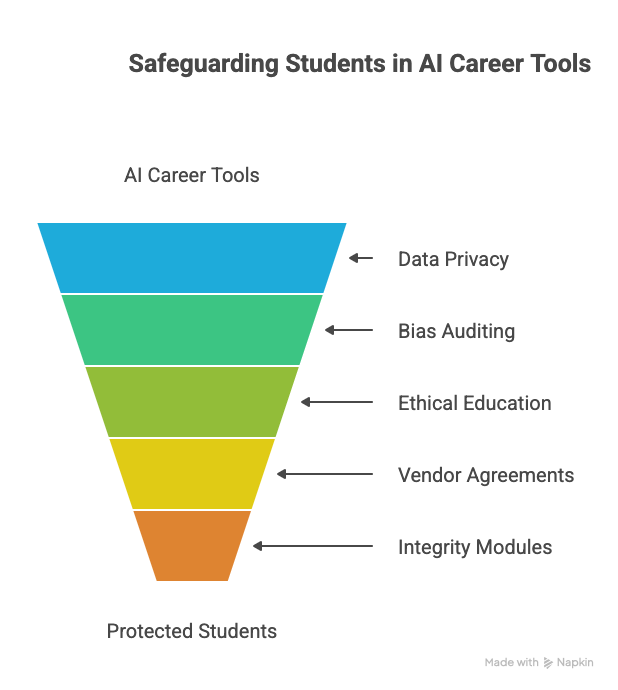

What safeguards protect students using AI career tools?

Effective safeguards prevent demographic bias, protect personal data, and ensure academic integrity. You must implement strict data privacy rules, audit AI models for biased career routing, and proactively educate your students on the ethical boundaries of generative AI to prevent misrepresentation and outright plagiarism.

Deploying AI without guardrails risks exposing student data and reinforcing historical workforce biases.
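Auditing for biased career routing can start with simple arithmetic. The Python sketch below compares how often a copilot recommends a given career track to students in different groups and flags any group whose rate falls below 80% of the highest group's rate. That 0.8 threshold borrows the "four-fifths" rule of thumb from US employment-selection guidance; treat it, the group labels, and the function names as assumptions for illustration, not as a legal or statistical standard for advising.

```python
from collections import defaultdict


def recommendation_rates(records):
    """Share of students in each group who received a given recommendation.

    `records` is an iterable of (group_label, was_recommended) pairs, e.g. logged
    copilot outputs for one career track. Group labels here are hypothetical.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for group, recommended in records:
        totals[group] += 1
        if recommended:
            hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}


def flag_disparities(rates, threshold=0.8):
    """Return {group: ratio} for groups whose rate is below threshold x the top rate."""
    top = max(rates.values())
    if top == 0:
        return {}  # nobody was recommended this track, so there is nothing to compare
    return {g: r / top for g, r in rates.items() if r / top < threshold}


# Illustrative, made-up log: group A recommended 40/100 times, group B 25/100 times.
sample = [("A", True)] * 40 + [("A", False)] * 60 + [("B", True)] * 25 + [("B", False)] * 75
flags = flag_disparities(recommendation_rates(sample))
```

In this made-up sample, group B's ratio is 0.25 / 0.40 = 0.625, so it would be flagged for human review. A flag is a prompt to investigate, not proof of bias; small samples in particular can produce noisy ratios.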

NACE reports that 40% of career centers hold deep concerns about the ethical implications of AI, while 27% worry specifically about AI collecting personal data on students.

To protect your students, secure enterprise-level agreements with AI vendors that guarantee zero data retention and prohibit training models on your students’ personally identifiable information (PII).
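Vendor agreements are the primary control here, but a center could also strip obvious identifiers before any text leaves campus systems, as a defense-in-depth measure. The Python sketch below shows regex-based redaction; the patterns, including the `S1234567` student ID format, are assumptions. Regexes will miss plenty of PII (names, addresses, free-text details), so this complements rather than replaces a vendor agreement and a vetted PII-detection tool.

```python
import re

# Hypothetical patterns; a real deployment would tune these to the institution's
# own ID formats and pair them with a dedicated PII-detection tool.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\(?\b\d{3}\)?[-.\s]?\d{3}[-.\s]?\d{4}\b"),  # US-style numbers
    "STUDENT_ID": re.compile(r"\b[Ss]\d{7}\b"),  # assumed format, e.g. S1234567
}


def redact(text: str) -> str:
    """Replace each matched identifier with a bracketed placeholder label."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```

For example, `redact("Email jane.doe@univ.edu or call 555-123-4567 about S1234567")` returns `"Email [EMAIL] or call [PHONE] about [STUDENT_ID]"`, so the prompt keeps its meaning while the identifiers stay on campus.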

Next, tackle the integrity issue head-on. According to OneGoal, you must explicitly teach students the difference between using AI as a brainstorming partner and using it to fabricate an entire cover letter.

Currently, only 35% of career centers run AI workshops for students. Build mandatory modules that outline acceptable use, so students understand how to leverage AI for research without crossing the line into application fraud.

Also Read: What guardrails do career centers need for AI use in student job preparation?

When should an AI bot hand off to a human advisor?

AI must instantly hand off to a human when a student faces emotional distress, mentions mental health crises, or requires deeply nuanced, non-linear career mapping. Establish hardcoded trigger words and conditional autonomy protocols so bots seamlessly escalate sensitive, unresolved, or complex issues to staff.

Treat AI autonomy on a spectrum, not as a simple binary. According to Trackmind’s framework on AI agent handoff protocols, organizations should use "Conditional Autonomy," where an AI agent operates independently within strict boundaries but automatically escalates edge cases to a human reviewer.

Apply this directly to your career center. If a student asks an AI Career Advising Assistant, "What is the median starting salary for an entry-level accountant?", the bot handles it.

But if a student types, "I'm failing my major, losing my scholarship, and don't know what to do," the system must trigger an immediate human handoff.

Define a robust set of natural language processing (NLP) triggers for stress, crisis, or complex identity-related queries, such as navigating workplace accommodations for a disability.

Let the bot clear the administrative queue, but reserve conversations that call for human empathy for your licensed staff.
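The conditional-autonomy pattern described above can be sketched as a simple router: the bot keeps a message unless it matches an escalation trigger or the conversation has stalled. In this Python sketch the trigger phrases, categories, and two-turn limit are hypothetical placeholders. Keyword matching is a crude stand-in for the NLP classifier a production system would use, and any real trigger list should be written and reviewed with counseling staff.

```python
import re
from dataclasses import dataclass

# Hypothetical trigger lists, checked in order so crisis signals win.
# Keyword matching misses paraphrases; it only illustrates the routing logic.
ESCALATION_TRIGGERS = {
    "crisis": [r"\bhopeless\b", r"\bcan'?t go on\b", r"\bhurt myself\b"],
    "distress": [r"\bfailing\b", r"\blosing my scholarship\b", r"\bdon'?t know what to do\b"],
    "accommodation": [r"\baccommodations?\b", r"\bdisabilit(?:y|ies)\b"],
}
MAX_UNRESOLVED_TURNS = 2  # assumed limit before a stuck conversation goes to staff


@dataclass
class RoutingDecision:
    handler: str  # "bot" or "human"
    reason: str


def route(message: str, unresolved_turns: int = 0) -> RoutingDecision:
    """Keep routine questions with the bot; escalate sensitive or stalled ones."""
    text = message.lower()
    for category, patterns in ESCALATION_TRIGGERS.items():
        if any(re.search(p, text) for p in patterns):
            return RoutingDecision("human", f"trigger:{category}")
    if unresolved_turns >= MAX_UNRESOLVED_TURNS:
        return RoutingDecision("human", "unresolved")
    return RoutingDecision("bot", "within bounds")
```

With these placeholders, the salary question stays with the bot, while "I'm failing my major, losing my scholarship, and don't know what to do" is routed to a human with the reason `trigger:distress`. Recording the reason gives staff context at handoff and makes the trigger list auditable.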

Also Read: AI in Career Services: Benefits, Limits, and Ethical Best Practices

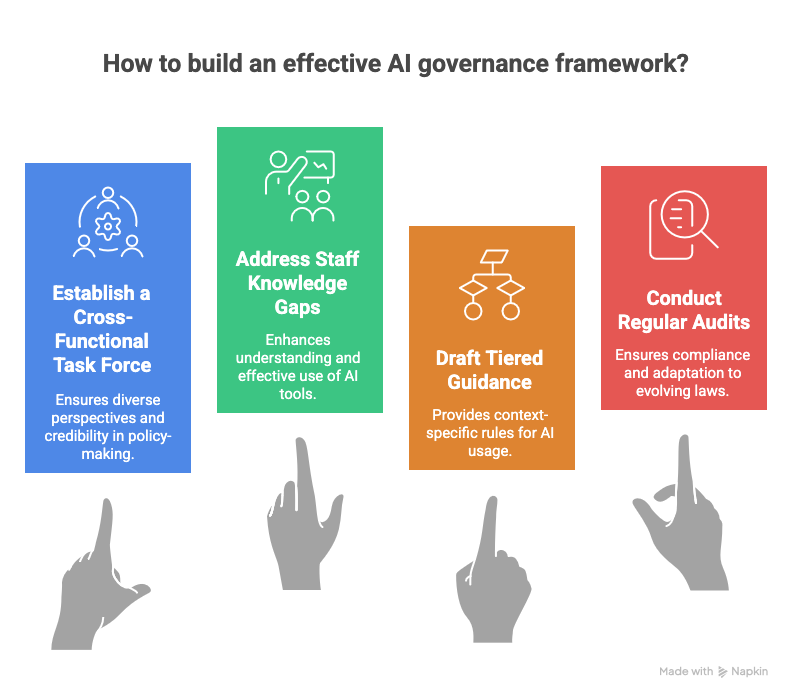

How do we build an effective AI governance framework?

Establish a cross-functional AI task force comprising career services, IT, legal, and student representatives. This body dictates tiered usage policies, mandates continuous staff training, and conducts regular bias audits to ensure your AI deployments remain legally compliant and aligned with institutional values.

Never leave AI governance solely to the IT department or a single tech-savvy career counselor.

According to FeedbackFruits, effective AI governance in higher education requires institutional infrastructure and a cross-functional task force to build policies that carry genuine credibility.

Start by addressing your own team's knowledge gaps. The 2025 NACE Quick Poll reveals that 64% of centers cite a "lack of staff expertise" as the primary barrier to AI adoption, yet only 40% provide AI workshops for their internal staff.

You cannot govern what your team does not understand. Implement mandatory, ongoing training on AI capabilities.

From there, draft tiered guidance based on context rather than issuing blanket bans.

Clearly define which general-purpose generative models are permissible for resume reviews and when students should instead use dedicated platforms, such as YouScience, for aptitude discovery.
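Tiered guidance can be captured as a small lookup table that staff tools consult before a given AI tool is used for a given task. In this Python sketch, the tier names, task labels, tool categories, and example entries are hypothetical, not recommendations. Unreviewed combinations fall back to "pending task-force review" rather than an outright ban, in keeping with context-based guidance.

```python
from enum import Enum


class Tier(Enum):
    APPROVED = "approved"
    ADVISOR_REVIEW = "allowed with advisor review"
    NOT_PERMITTED = "not permitted"
    PENDING = "pending task-force review"


# Hypothetical policy table keyed by (task, tool category). Categories are
# placeholders; "dedicated_aptitude_platform" stands for tools such as YouScience.
POLICY = {
    ("resume_review", "general_generative_model"): Tier.ADVISOR_REVIEW,
    ("aptitude_discovery", "dedicated_aptitude_platform"): Tier.APPROVED,
    ("aptitude_discovery", "general_generative_model"): Tier.NOT_PERMITTED,
    ("cover_letter_drafting", "general_generative_model"): Tier.ADVISOR_REVIEW,
}


def check_usage(task: str, tool_category: str) -> Tier:
    """Look up the tier for a task/tool pair; unknown pairs go to the task force."""
    return POLICY.get((task, tool_category), Tier.PENDING)
```

Keeping the table in one place also gives each governance audit a concrete artifact to review and update.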

Quarterly governance audits help ensure your tools adapt safely to evolving data privacy laws and shifting job market realities.

Also Read: How can career services teams build FERPA-safe systems without slowing student support?

Also Read: How should universities structure a career center technology stack to support scale and outcomes?

Wrapping Up

AI copilots are only as effective as the system they operate within. Without clear workflows, governance, and integration across the student journey, even the most advanced tools struggle to deliver meaningful outcomes.

Career centers that are seeing real impact are not just adopting AI; they are embedding it across advising, standardizing how it supports students, and ensuring advisors stay focused on high-value, human interactions.

The shift is less about adding tools and more about building a connected, scalable ecosystem.

Hiration is designed with this in mind. Instead of stitching together multiple point solutions, it brings career assessments, resume optimization, interview simulation, and more into a single system, alongside a dedicated counselor module for managing cohorts, workflows, and analytics.

The result is a more structured, institution-ready approach to scaling career readiness, backed by enterprise-grade security and compliance.

As AI adoption accelerates, the advantage will come from how intentionally it is implemented.

Career centers that take a systems-first approach will be better positioned to scale support, maintain control, and deliver measurable outcomes for their students.

AI Copilots for Career Advising — FAQs

What are AI copilots in career advising?

AI copilots are tools that assist advisors by handling intake, summarizing sessions, generating follow-ups, and supporting student preparation tasks across the advising lifecycle.

How do AI copilots support different advising stages?

They assist pre-session with intake and scheduling, during sessions with documentation, and post-session with action plans, follow-ups, and progress tracking.

Why do many AI implementations fail in career centers?

Many implementations are fragmented, with tools adopted in isolation. Without integration into workflows and governance, they increase complexity instead of improving efficiency.

What safeguards are needed when using AI in career services?

Career centers must protect student data, prevent bias in recommendations, and ensure ethical use by defining clear policies, auditing systems, and educating students on responsible AI use.

When should AI systems hand off to human advisors?

AI should escalate to human advisors when students show emotional distress, face complex decisions, or require nuanced, context-sensitive guidance beyond standard workflows.

What is an AI governance framework in career services?

It is a structured approach involving policies, training, audits, and cross-functional oversight to ensure AI tools are used responsibly, securely, and effectively across the institution.

Who should be involved in AI governance?

Governance should include career services, IT, legal teams, and student representatives to ensure alignment with institutional goals and compliance requirements.

What is the biggest benefit of AI copilots for career centers?

The biggest benefit is improved efficiency, allowing advisors to focus on high-impact mentoring while AI handles repetitive administrative and analytical tasks.

What is the biggest risk of AI copilots?

The biggest risks are loss of control over data, bias in recommendations, and over-reliance on automation without proper oversight and governance.

How can career centers ensure successful AI adoption?

Success depends on integrating AI into workflows, defining clear use cases, training staff, maintaining governance, and continuously monitoring impact and outcomes.